Artificial Intelligence: Official Machine Learning Blog of Amazon Web Services

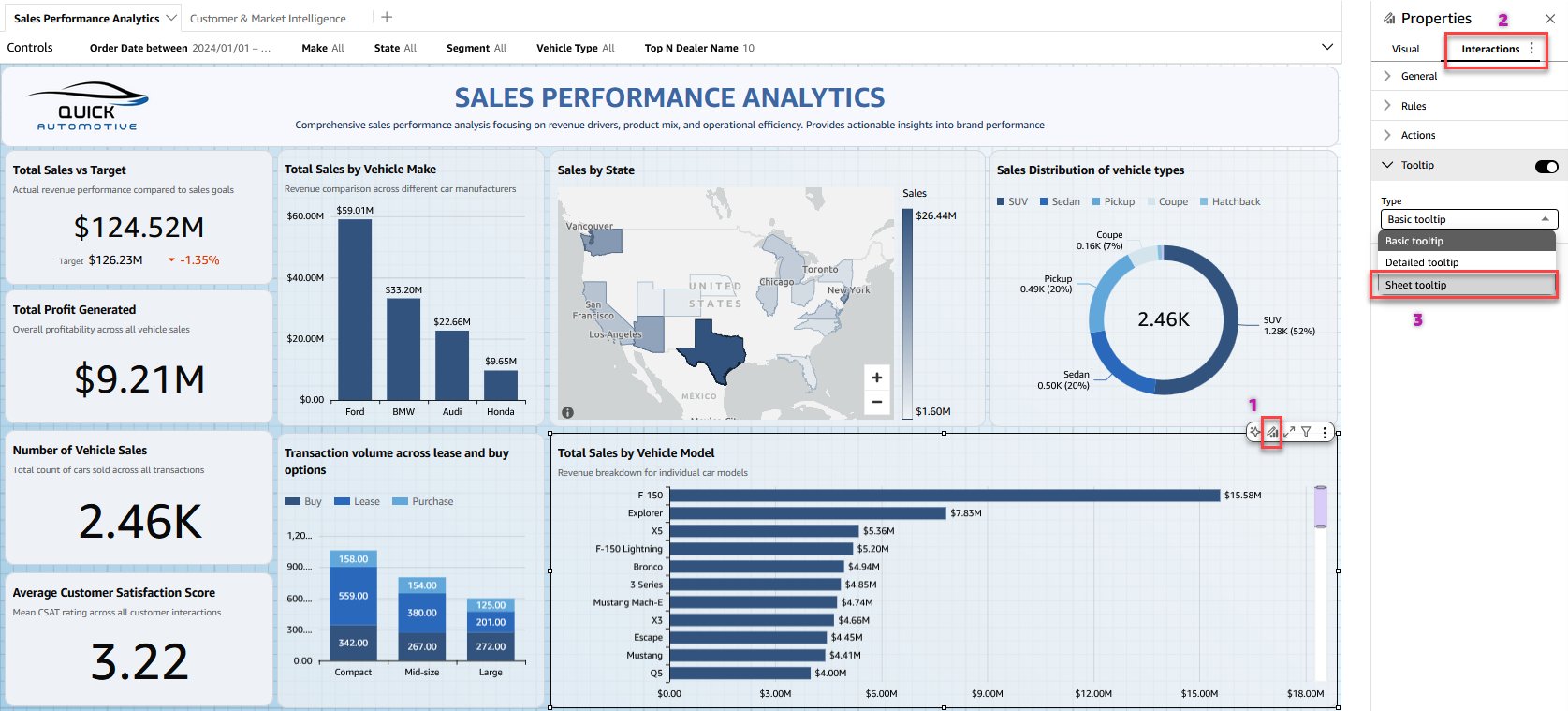

- Create rich, custom tooltips in Amazon Quick Sight by Meshan Khosla on April 15, 2026 at 3:22 pm

Today, we’re announcing sheet tooltips in Amazon Quick Sight. Dashboard authors can now design custom tooltip layouts using free-form layout sheets. These layouts combine charts, key performance indicator (KPI) metrics, text, and other visuals into a single tooltip that renders dynamically when readers hover over data points.

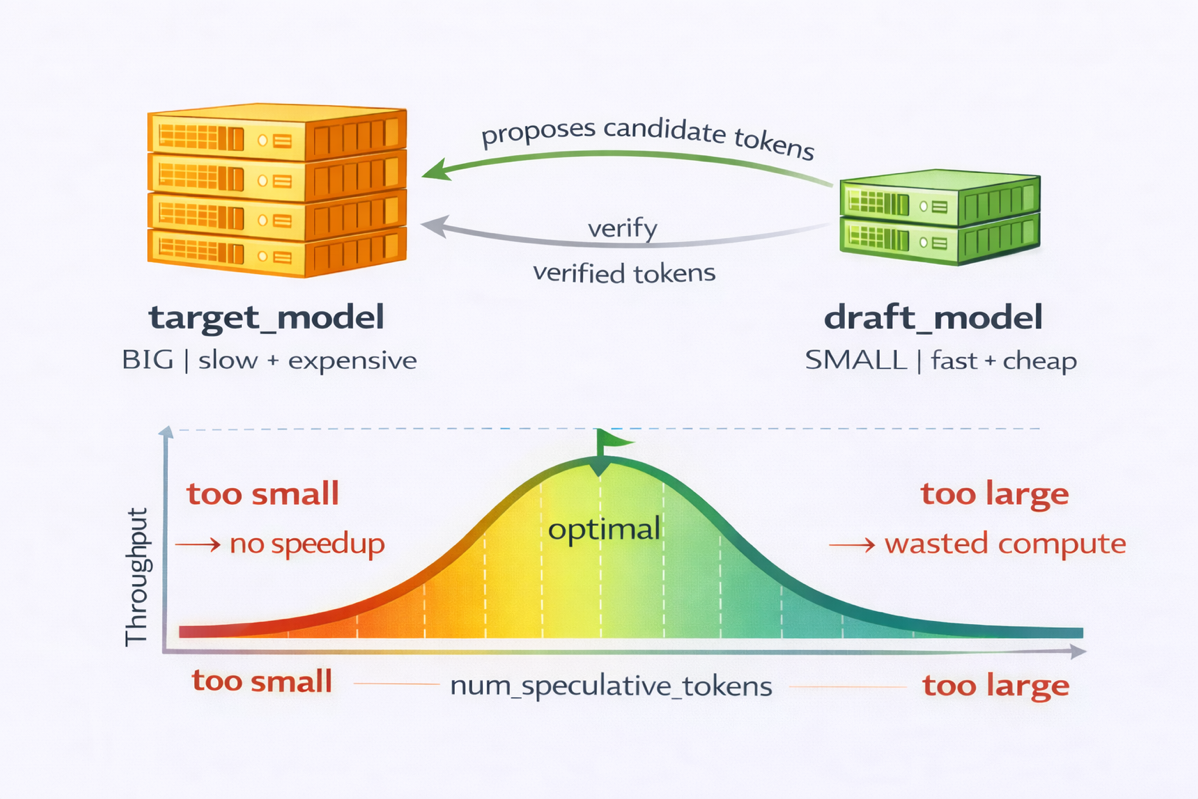

- Accelerating decode-heavy LLM inference with speculative decoding on AWS Trainium and vLLM by Yahav Biran on April 15, 2026 at 3:20 pm

In this post, you will learn how speculative decoding works and why it helps reduce cost per generated token on AWS Trainium2.
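As a rough illustration of the idea (not the code from the post, and not the vLLM or AWS Neuron API), the sketch below shows the greedy form of speculative decoding: a cheap draft model proposes `k` tokens, the target model checks them, and the longest agreeing prefix is kept along with one token from the target. The names `toy_target`, `toy_draft`, `speculative_decode`, and `greedy_decode` are hypothetical, and the "models" are toy functions over integer tokens. Production systems verify all proposed tokens in one batched forward pass and use rejection sampling when decoding is not greedy.

```python
from typing import Callable, List

# A "model" here is any function mapping a token sequence to the next token.
NextToken = Callable[[List[int]], int]


def greedy_decode(target: NextToken, prompt: List[int], max_new_tokens: int) -> List[int]:
    """Baseline: one target-model call per generated token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target(tokens))
    return tokens


def speculative_decode(
    target: NextToken,
    draft: NextToken,
    prompt: List[int],
    max_new_tokens: int,
    k: int = 4,
) -> List[int]:
    """Greedy speculative decoding: the draft proposes, the target verifies."""
    tokens = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(tokens) < goal:
        # 1. The cheap draft model proposes up to k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(tokens))):
            proposal.append(draft(tokens + proposal))

        # 2. The target checks each proposed position. This loop stands in
        #    for what a real system does in a single batched forward pass.
        accepted: List[int] = []
        for tok in proposal:
            expected = target(tokens + accepted)
            if expected == tok:
                accepted.append(tok)
            else:
                # First disagreement: keep the target's token and stop.
                accepted.append(expected)
                break
        else:
            # Every draft token was accepted, so the target adds a bonus token
            # if there is still room.
            if len(tokens) + len(accepted) < goal:
                accepted.append(target(tokens + accepted))

        tokens.extend(accepted)
    return tokens


# Hypothetical toy models: the target counts upward modulo 10; the draft
# agrees except after a 4, where it guesses wrong.
def toy_target(tokens: List[int]) -> int:
    return (tokens[-1] + 1) % 10


def toy_draft(tokens: List[int]) -> int:
    return 0 if tokens[-1] == 4 else (tokens[-1] + 1) % 10


if __name__ == "__main__":
    print(speculative_decode(toy_target, toy_draft, [0], max_new_tokens=12))
```

In this greedy setting the output matches plain target-only decoding token for token. The saving comes from the target validating several positions per step rather than producing one token per step, which is why the technique suits decode-heavy workloads.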

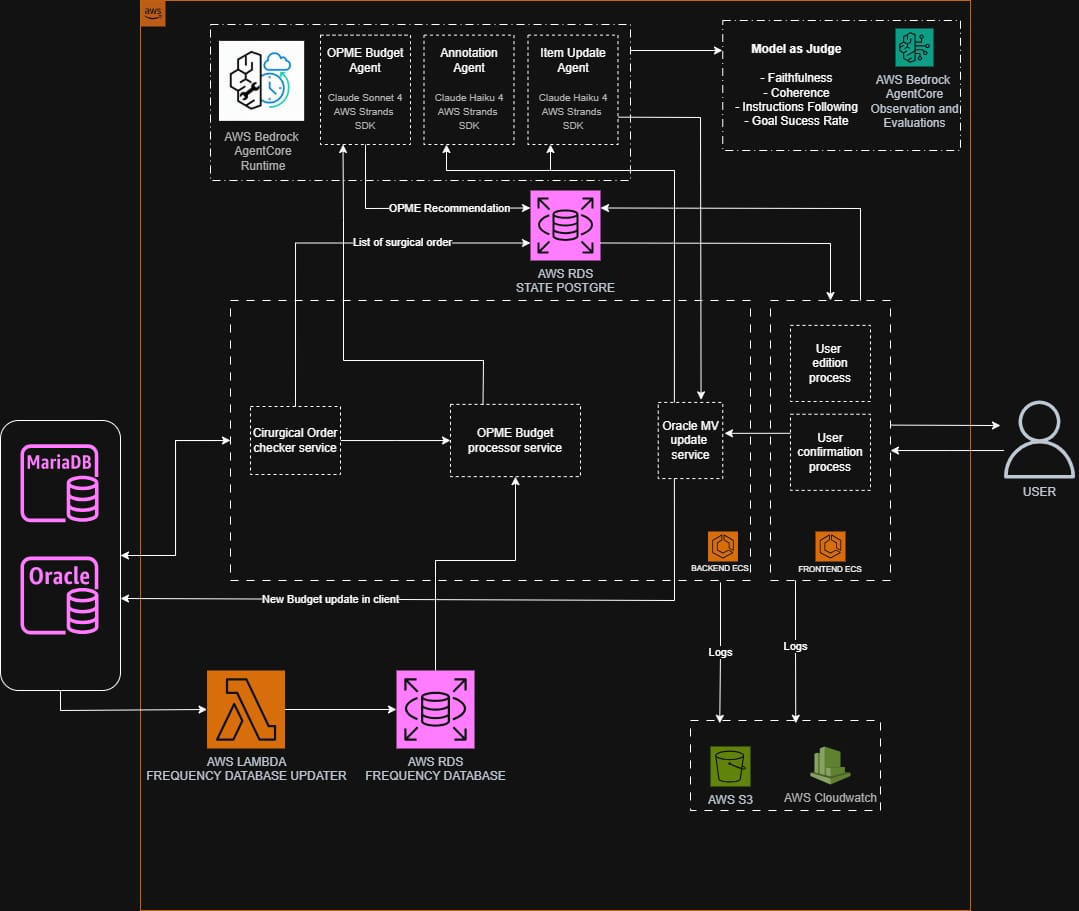

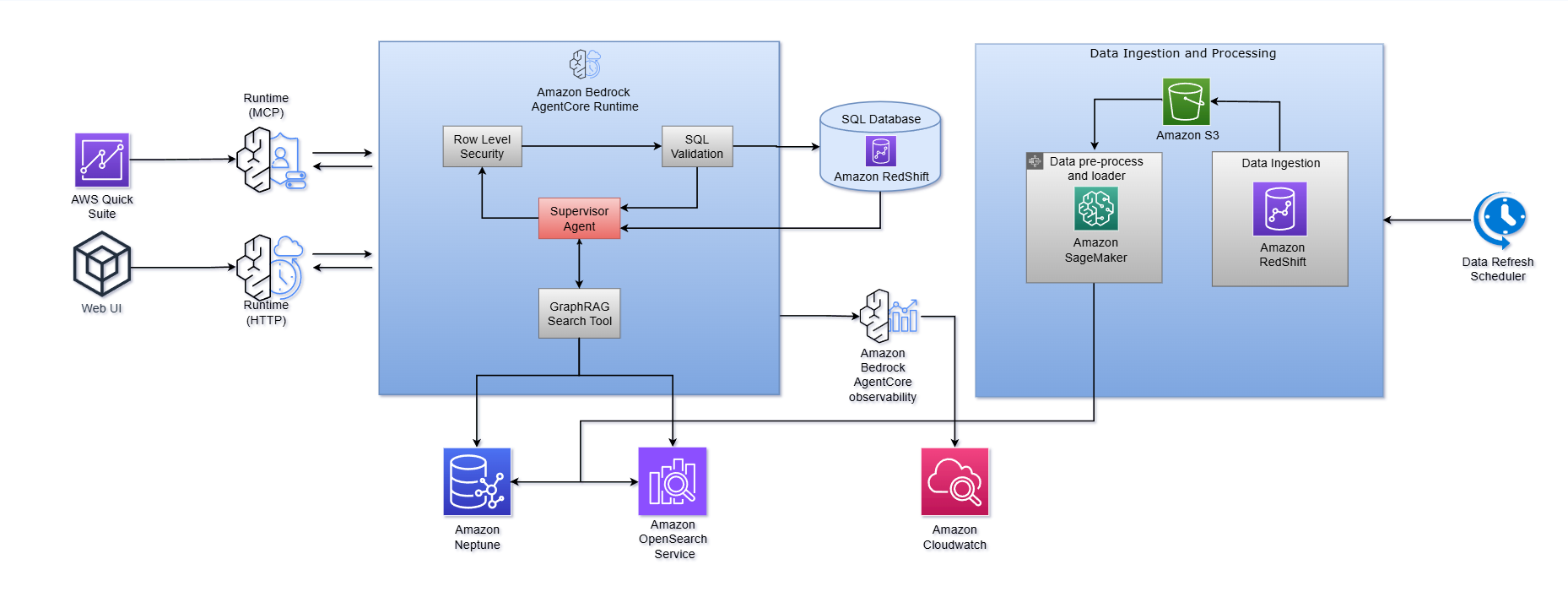

- Rede Mater Dei de Saúde: Monitoring AI agents in the revenue cycle with Amazon Bedrock AgentCore by Renata Salvador Grande on April 15, 2026 at 3:15 pm

This post is cowritten by Renata Salvador Grande, Gabriel Bueno and Paulo Laurentys at Rede Mater Dei de Saúde. The growing adoption of multi-agent AI systems is redefining critical operations in healthcare. In large hospital networks, where thousands of decisions directly impact cash flow, service delivery times, and the risk of claim denials, the ability

- Navigating the generative AI journey: The Path-to-Value framework from AWS by Nitin Eusebius on April 14, 2026 at 6:19 pm

In this post, we introduce the Generative AI Path-to-Value (P2V) framework, a structured approach to help you move generative AI initiatives from concept to production and sustained value creation.

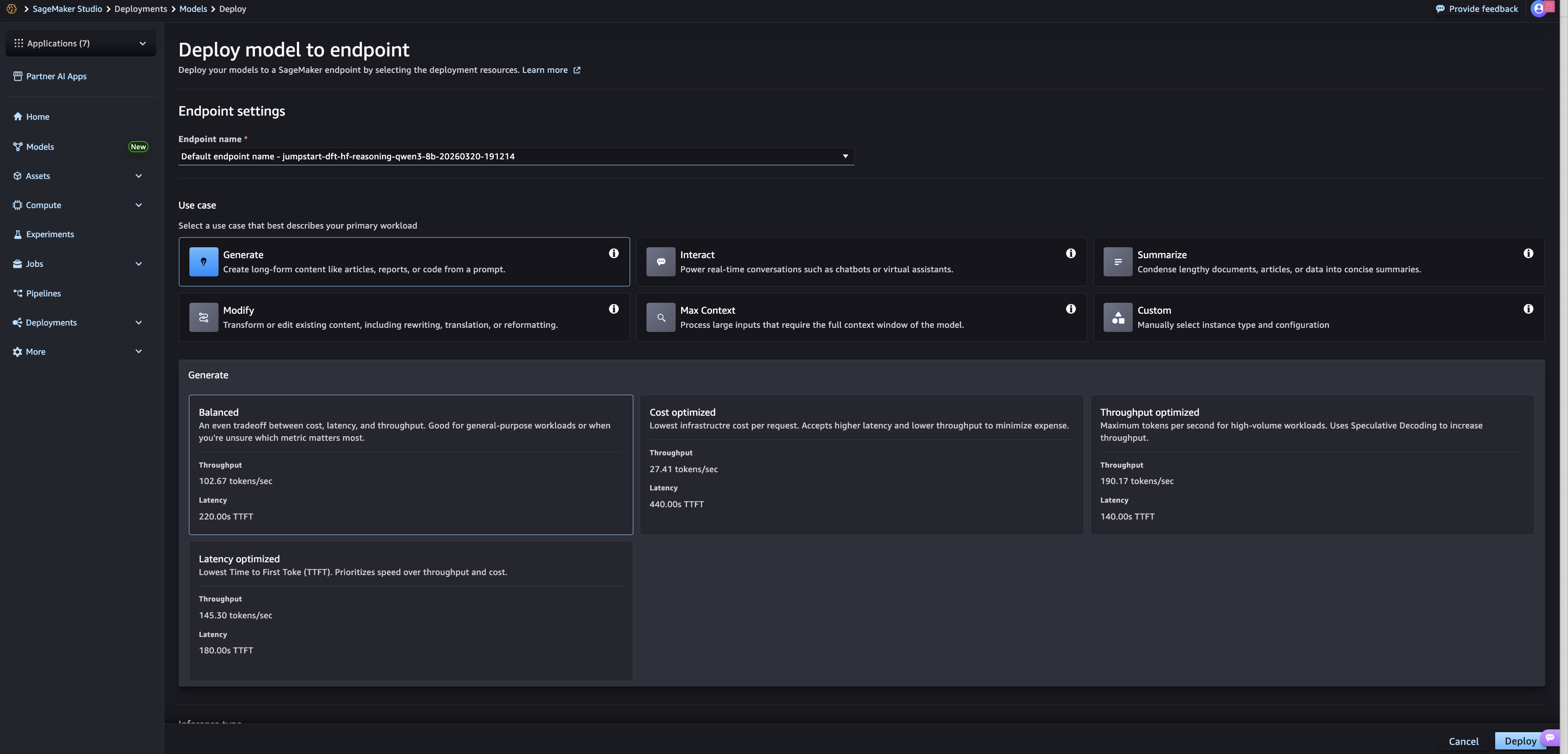

- Use-case based deployments on SageMaker JumpStart by Dan Ferguson on April 14, 2026 at 6:14 pm

We’re excited to announce the launch of Amazon SageMaker JumpStart optimized deployments. SageMaker JumpStart optimized deployments address the need for rich, straightforward deployment customization on SageMaker JumpStart by offering predefined deployment configurations designed for specific use cases. Customers keep the same level of visibility into the details of their proposed deployments, but deployments are now optimized for their specific use case and performance constraints.

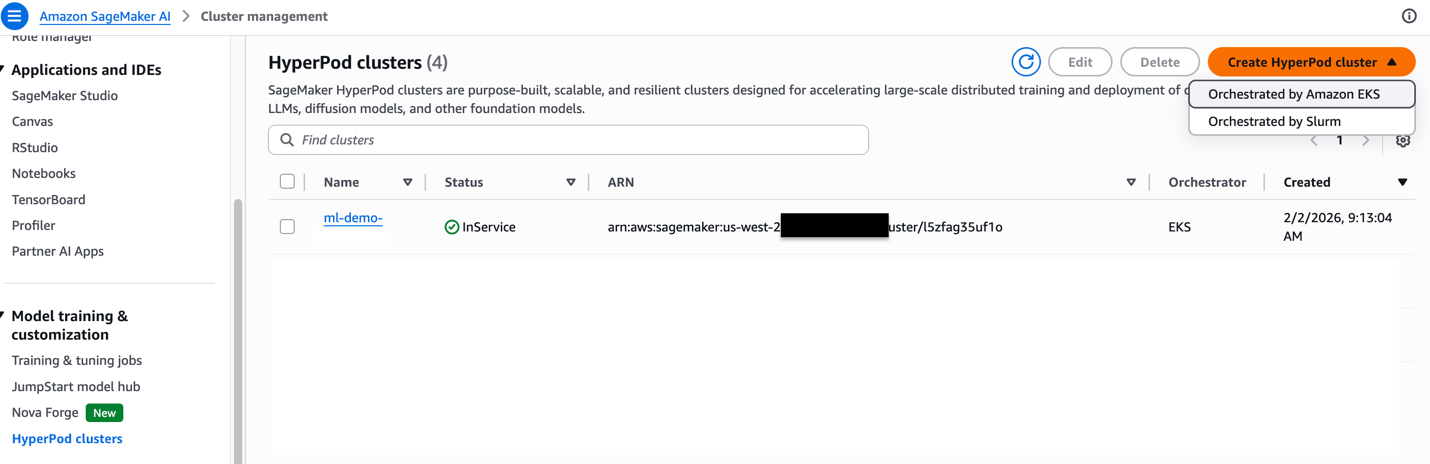

- Best practices to run inference on Amazon SageMaker HyperPod by Vinay Arora on April 14, 2026 at 6:09 pm

This post explores how Amazon SageMaker HyperPod provides a comprehensive solution for inference workloads. We walk you through the platform’s key capabilities for dynamic scaling, simplified deployment, and intelligent resource management. By the end of this post, you’ll understand how to use the HyperPod automated infrastructure, cost optimization features, and performance enhancements to reduce your total cost of ownership by up to 40% while accelerating your generative AI deployments from concept to production.

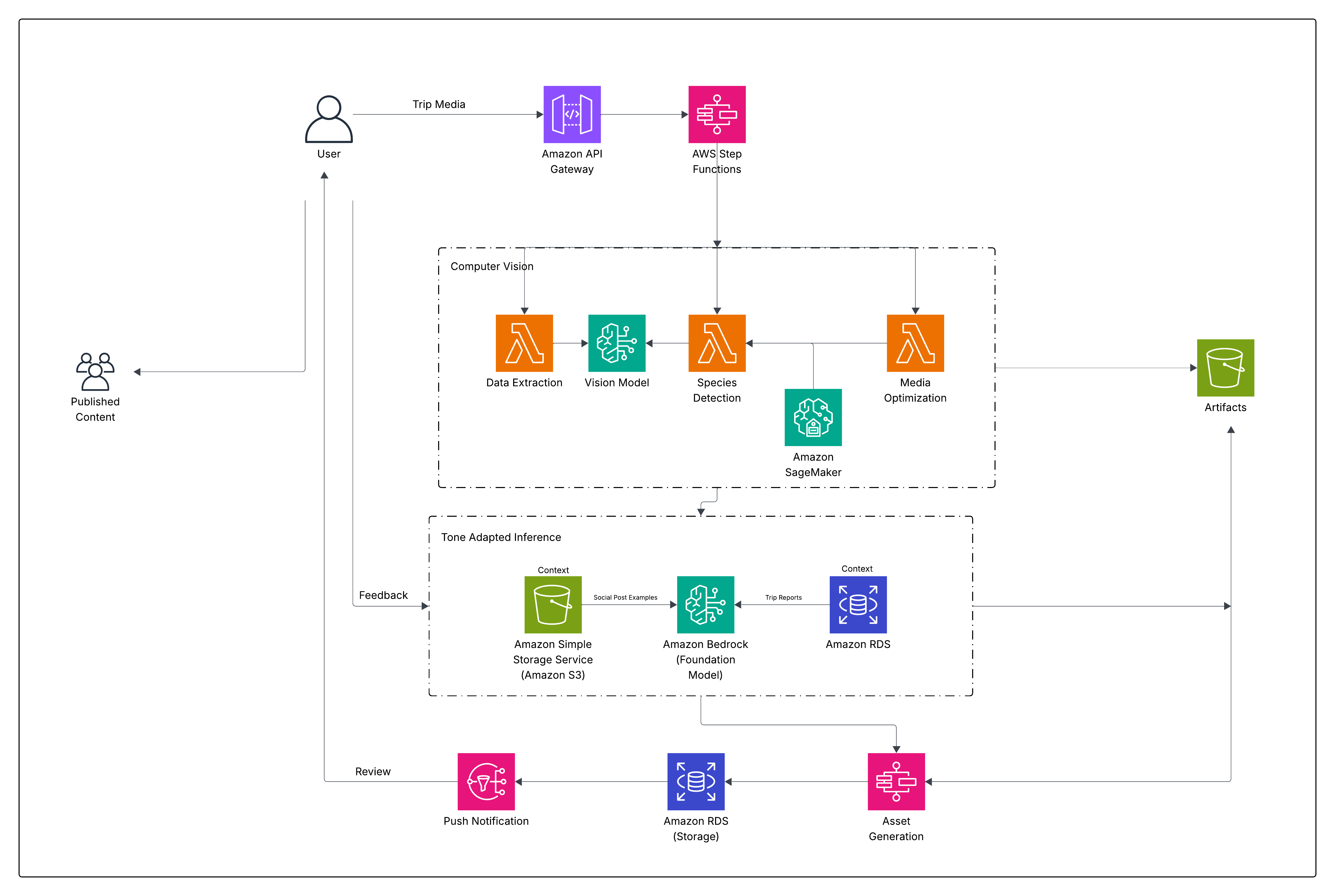

- How Guidesly built AI-generated trip reports for outdoor guides on AWS by David Lord, Taylor Lord, Shiva Prasad, Anup Banasavalli Hiriyanagowda, Nikhil Chandra on April 14, 2026 at 6:02 pm

In this post, we walk through how Guidesly built Jack AI on AWS using AWS Lambda, AWS Step Functions, Amazon Simple Storage Service (Amazon S3), Amazon Relational Database Service (Amazon RDS), Amazon SageMaker AI, and Amazon Bedrock to ingest trip media, enrich it with context, apply computer vision and generative AI, and publish marketing-ready content across multiple channels—securely, reliably, and at scale.

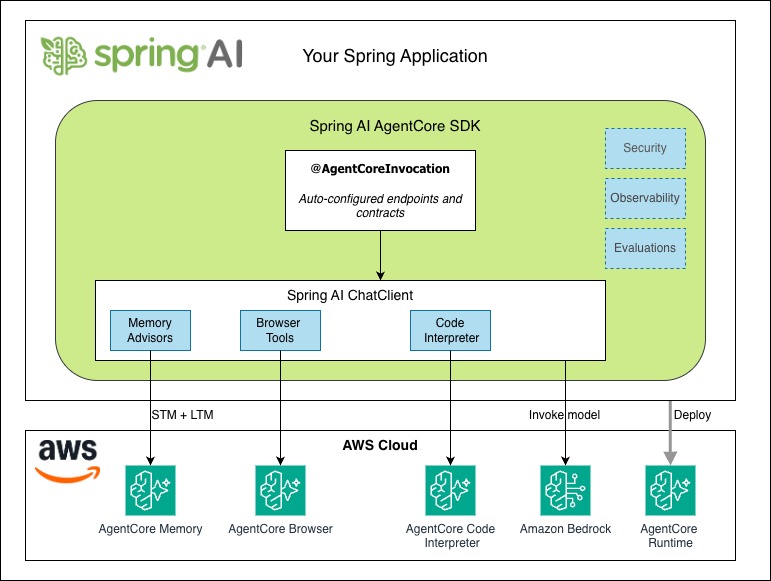

- Spring AI SDK for Amazon Bedrock AgentCore is now Generally Available by Andrei Shakirin on April 14, 2026 at 12:40 pm

With the new Spring AI AgentCore SDK, you can build production-ready AI agents and run them on the highly scalable AgentCore Runtime. The Spring AI AgentCore SDK is an open source library that brings Amazon Bedrock AgentCore capabilities into Spring AI. In this post, we build an AI agent starting with a chat endpoint, then adding streaming responses, conversation memory, and tools for web browsing and code execution.

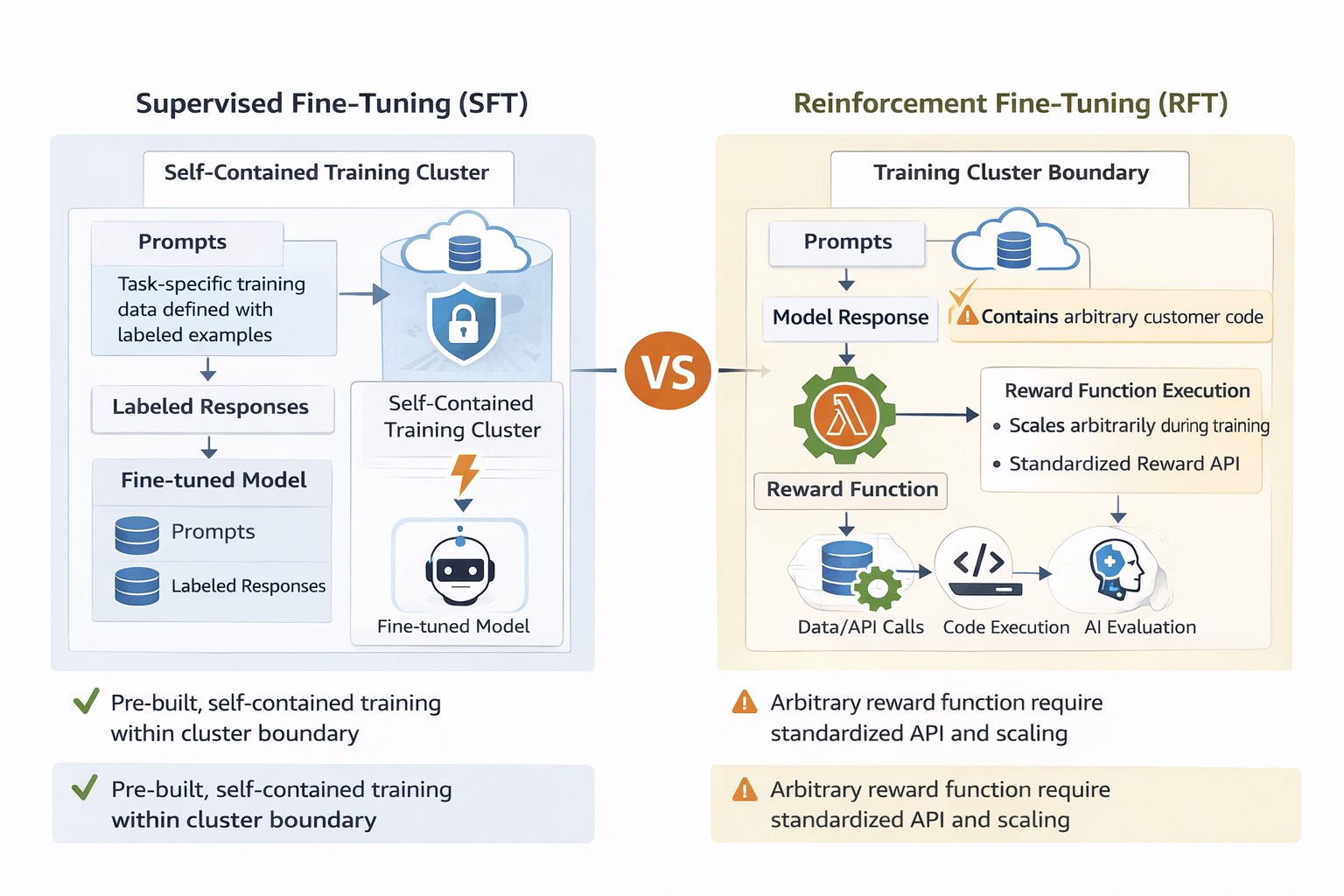

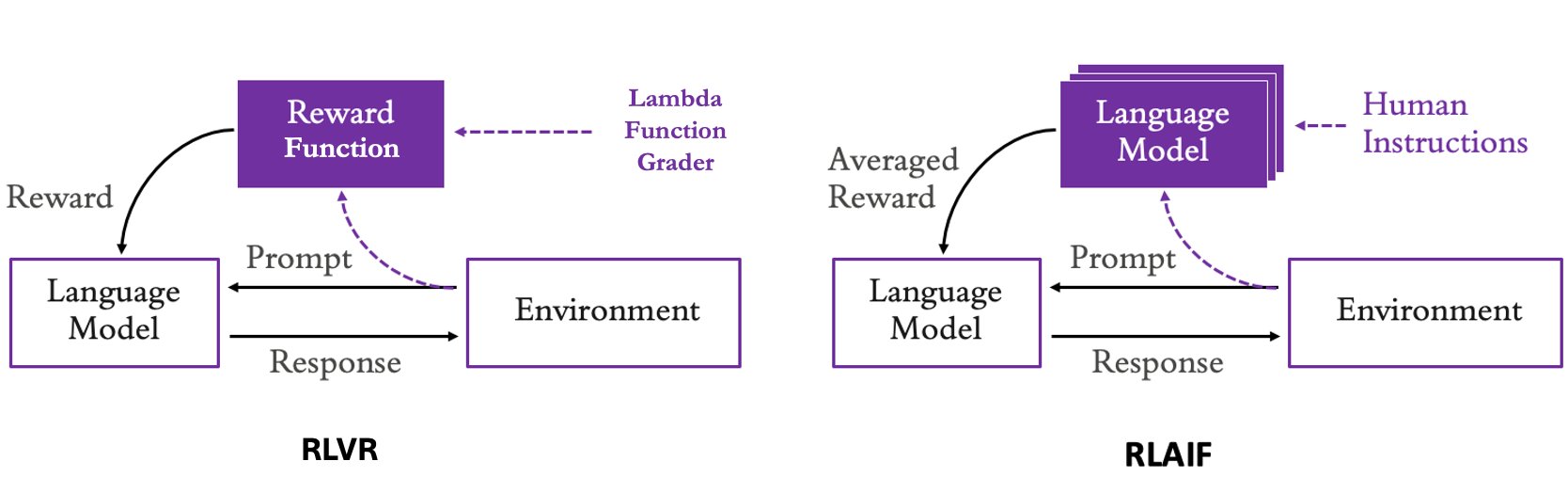

- How to build effective reward functions with AWS Lambda for Amazon Nova model customization by Manoj Gupta on April 13, 2026 at 4:01 pm

This post demonstrates how Lambda enables scalable, cost-effective reward functions for Amazon Nova customization. You’ll learn to choose between Reinforcement Learning via Verifiable Rewards (RLVR) for objectively verifiable tasks and Reinforcement Learning via AI Feedback (RLAIF) for subjective evaluation, design multi-dimensional reward systems that help you prevent reward hacking, optimize Lambda functions for training scale, and monitor reward distributions with Amazon CloudWatch. Working code examples and deployment guidance are included to help you start experimenting.
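To make the idea of a multi-dimensional reward concrete, here is a minimal sketch of a Lambda-style handler that scores a response on several independent dimensions and combines them with weights. Everything specific in it is an assumption for illustration: the event shape (`samples` with `response` and `reference` fields), the `Answer:` convention, the weights, and the function names are hypothetical and are not the payload format that Amazon Nova customization actually sends. Only the `lambda_handler(event, context)` entry-point signature is the standard Lambda convention for Python.

```python
import re
from typing import Any, Dict, List

# Hypothetical weights: correctness dominates, so a well-formatted but wrong
# answer cannot earn a high reward (a simple guard against reward hacking).
WEIGHTS = {"correctness": 0.7, "format": 0.2, "brevity": 0.1}

# Assumed convention: the response ends with a line such as "Answer: 42".
ANSWER_PATTERN = re.compile(r"Answer:\s*(.+)\s*$", re.IGNORECASE)


def score_response(response: str, reference: str, max_chars: int = 2000) -> Dict[str, float]:
    """Score one response on three dimensions and return a weighted total."""
    match = ANSWER_PATTERN.search(response.strip())
    answer = match.group(1).strip() if match else ""

    scores = {
        # Verifiable check: does the extracted answer equal the reference?
        "correctness": 1.0 if answer == reference.strip() else 0.0,
        # Did the model follow the expected output format at all?
        "format": 1.0 if match else 0.0,
        # Discourage padding the response to game other signals.
        "brevity": 1.0 if len(response) <= max_chars else 0.0,
    }
    total = sum(WEIGHTS[name] * value for name, value in scores.items())
    return {**scores, "total": round(total, 4)}


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, List[float]]:
    """Entry point; the event schema used here is an assumption."""
    rewards = [
        score_response(sample["response"], sample["reference"])["total"]
        for sample in event.get("samples", [])
    ]
    return {"rewards": rewards}


if __name__ == "__main__":
    demo_event = {
        "samples": [
            {"response": "6 * 7 = 42\nAnswer: 42", "reference": "42"},
            {"response": "Answer: 41", "reference": "42"},
        ]
    }
    print(lambda_handler(demo_event, None))
```

Returning the per-dimension scores alongside the total makes it easier to log each dimension separately and spot a policy that climbs on one dimension while the others stall.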

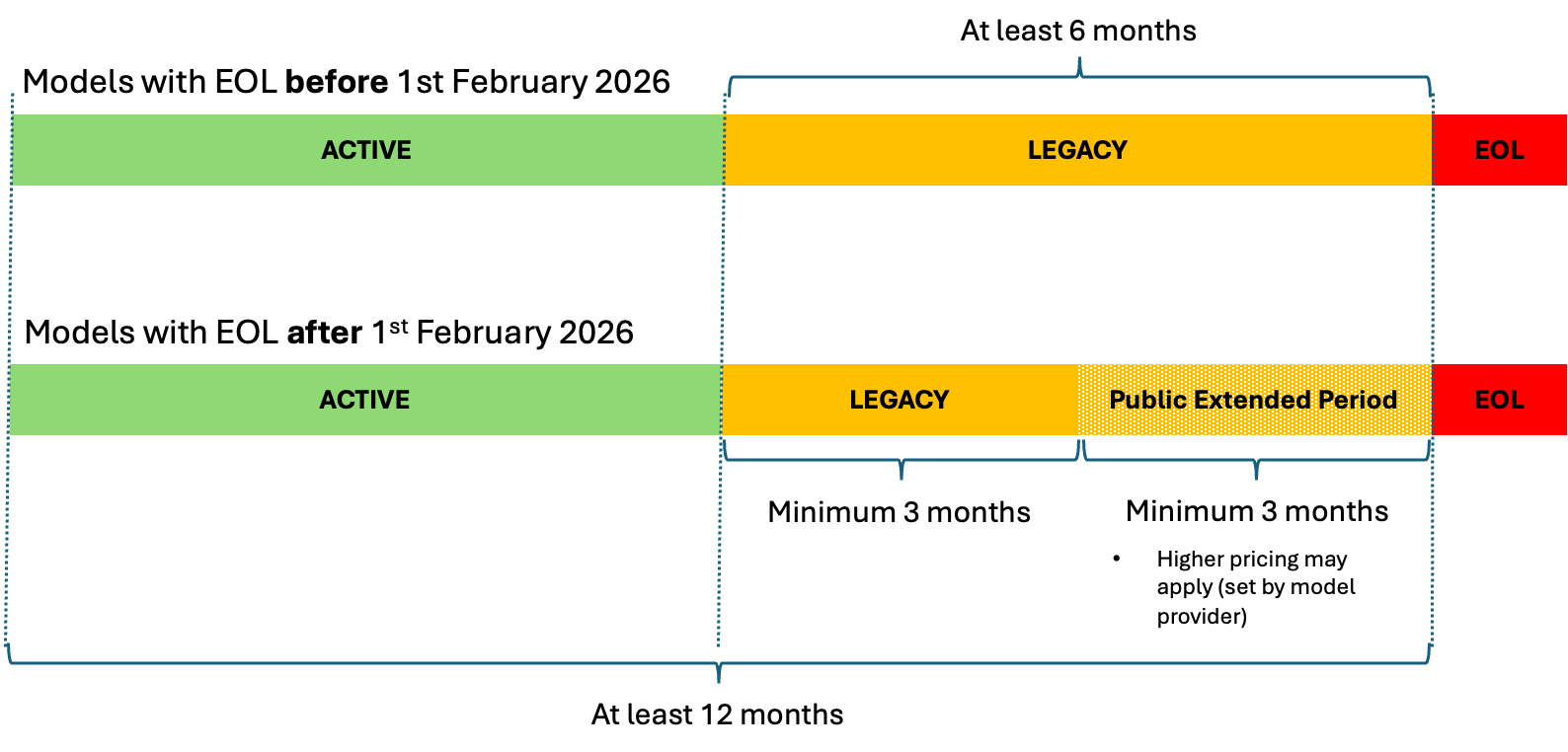

- Understanding Amazon Bedrock model lifecycle by Saurabh Trikande on April 9, 2026 at 5:33 pm

This post shows you how to manage FM transitions in Amazon Bedrock, so you can make sure your AI applications remain operational as models evolve. We discuss the three lifecycle states, how to plan migrations with the new extended access feature, and practical strategies to transition your applications to newer models without disruption.

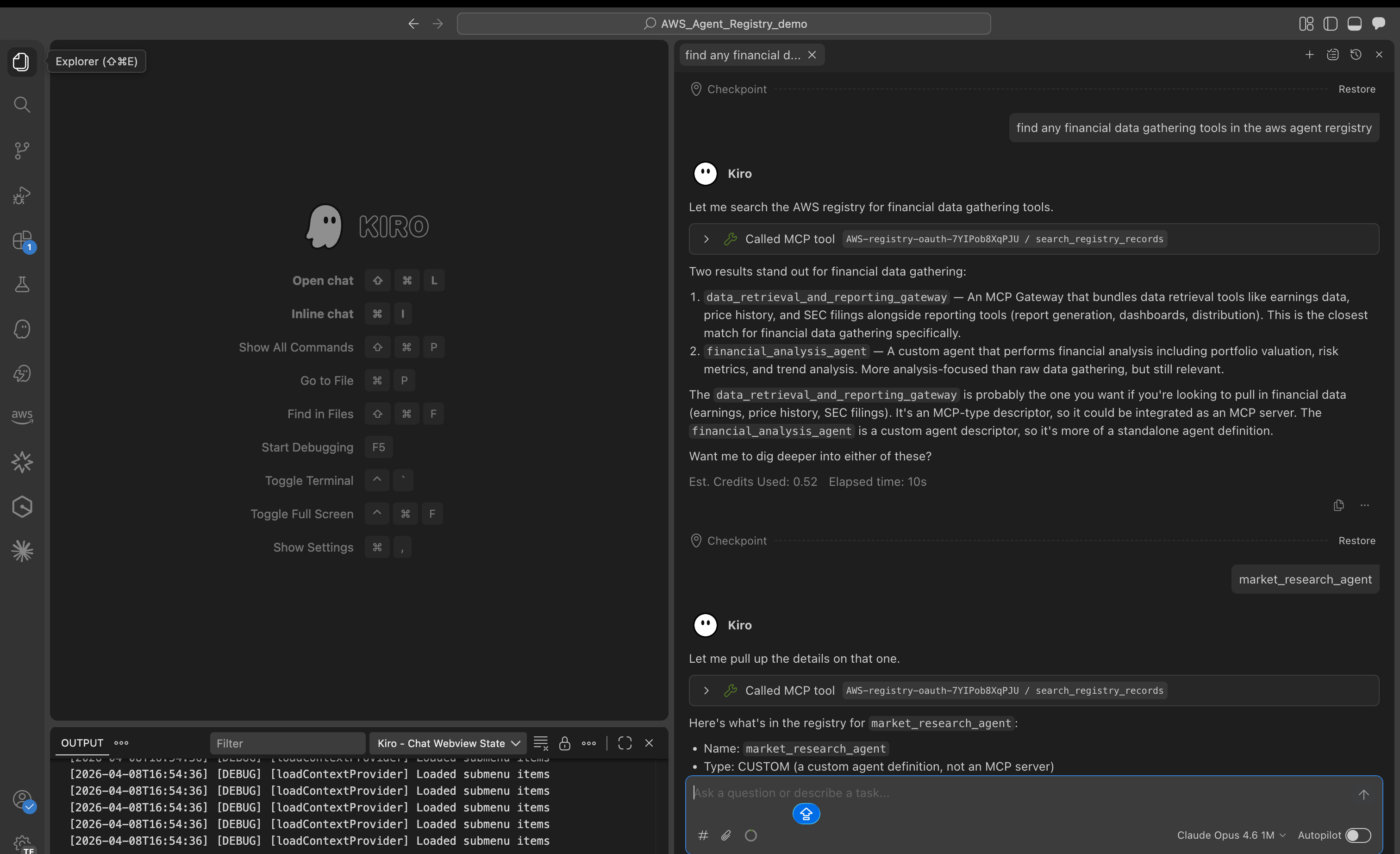

- The future of managing agents at scale: AWS Agent Registry now in preview by Preethi C N on April 9, 2026 at 5:28 pm

Today, we’re announcing AWS Agent Registry (preview) in AgentCore, a single place to discover, share, and reuse AI agents, tools, and agent skills across your enterprise.

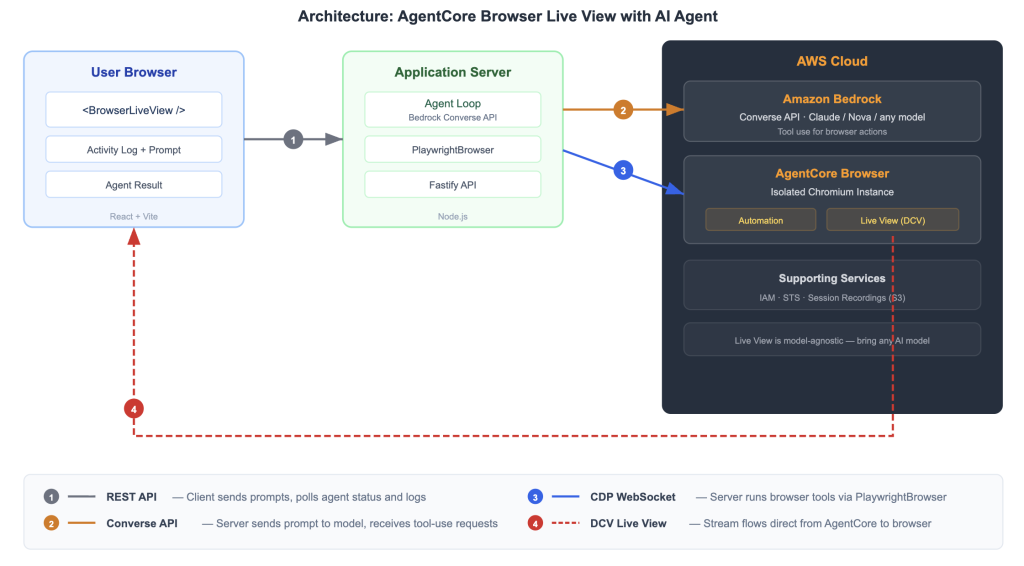

- Embed a live AI browser agent in your React app with Amazon Bedrock AgentCore by Sundar Raghavan on April 9, 2026 at 5:06 pm

This post walks you through three steps: starting a session and generating the Live View URL, rendering the stream in your React application, and wiring up an AI agent that drives the browser while your users watch. At the end, you will have a working sample application you can clone and run.

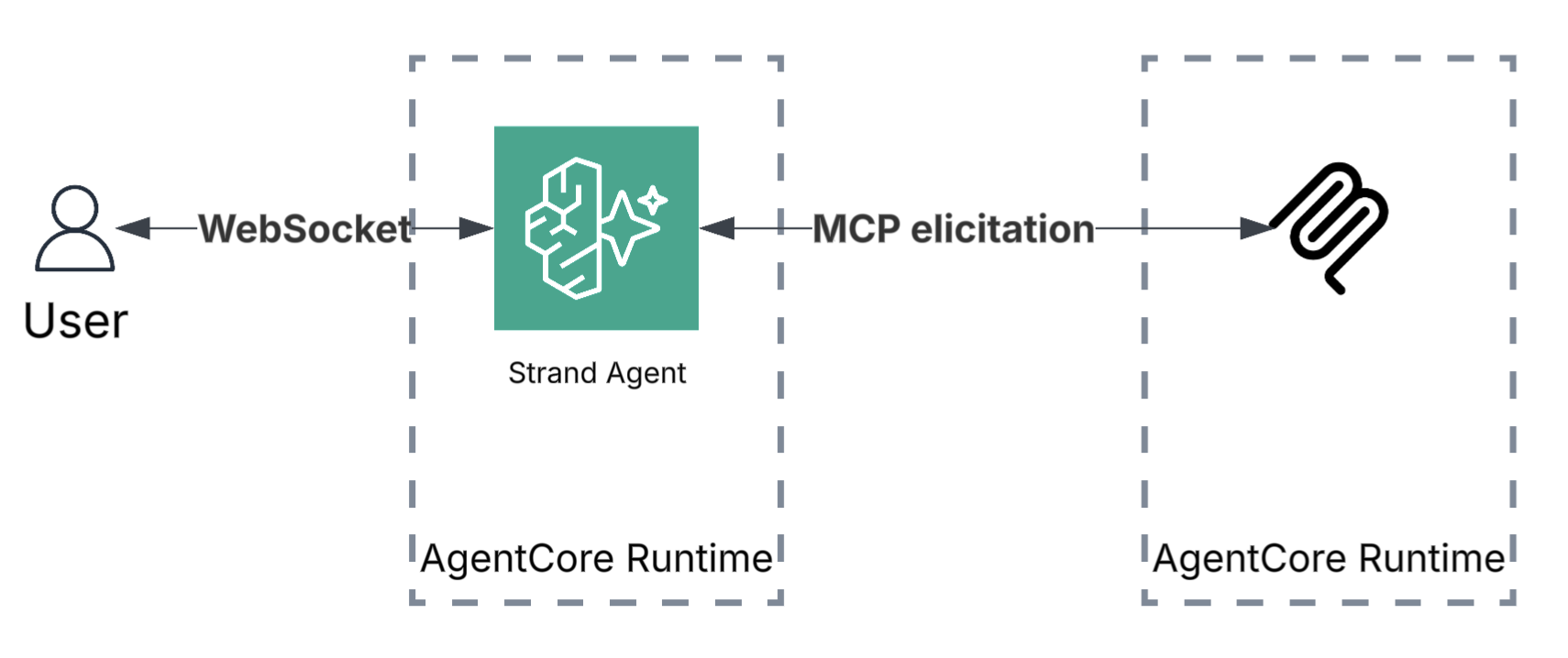

- Introducing stateful MCP client capabilities on Amazon Bedrock AgentCore Runtime by Evandro Franco on April 9, 2026 at 2:47 pm

In this post, you will learn how to build stateful MCP servers that request user input during execution, invoke LLM sampling for dynamic content generation, and stream progress updates for long-running tasks. You will see code examples for each capability and deploy a working stateful MCP server to Amazon Bedrock AgentCore Runtime.

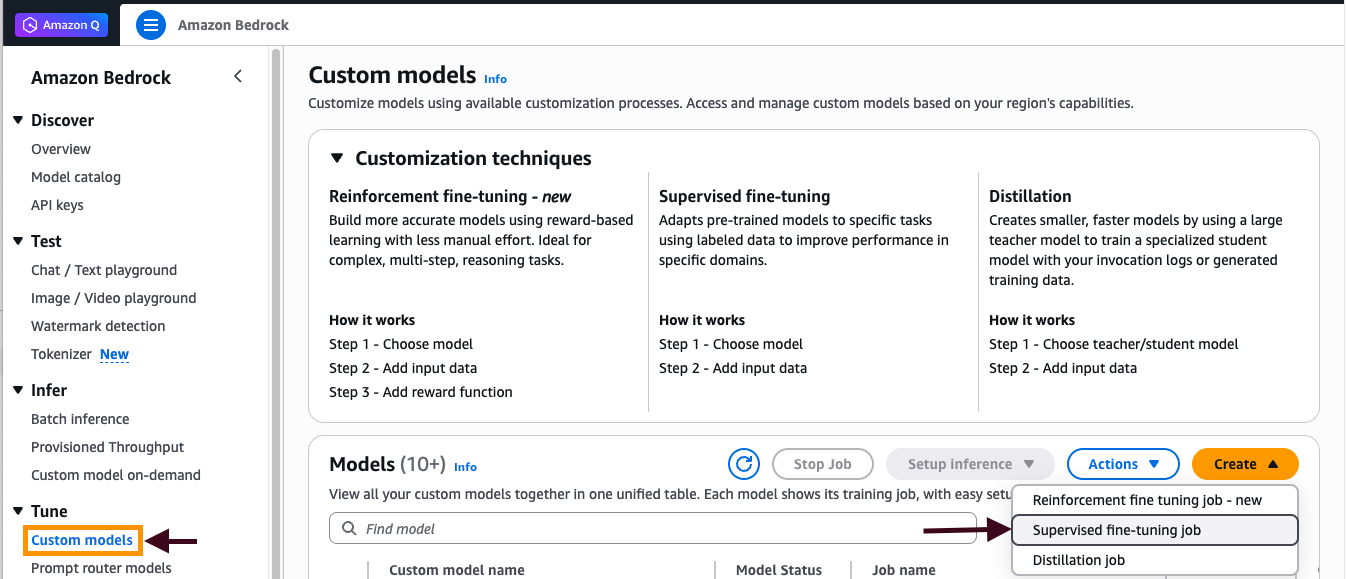

- Customize Amazon Nova models with Amazon Bedrock fine-tuning by Bhavya Sruthi Sode on April 8, 2026 at 7:51 pm

In this post, we’ll walk you through a complete implementation of model fine-tuning in Amazon Bedrock using Amazon Nova models, demonstrating each step through an intent classifier example that achieves superior performance on a domain-specific task. Throughout this guide, you’ll learn to prepare high-quality training data that drives meaningful model improvements, configure hyperparameters to optimize learning without overfitting, and deploy your fine-tuned model for improved accuracy and reduced latency. We’ll also show you how to evaluate your results using training metrics and loss curves.

- Human-in-the-loop constructs for agentic workflows in healthcare and life sciences by Pierre de Malliard on April 8, 2026 at 7:48 pm

In healthcare and life sciences, AI agents help organizations process clinical data, submit regulatory filings, automate medical coding, and accelerate drug development and commercialization. However, the sensitive nature of healthcare data and regulatory requirements like Good Practice (GxP) compliance require human oversight at key decision points. This is where human-in-the-loop (HITL) constructs become essential. In this post, you will learn four practical approaches to implementing human-in-the-loop constructs using AWS services.

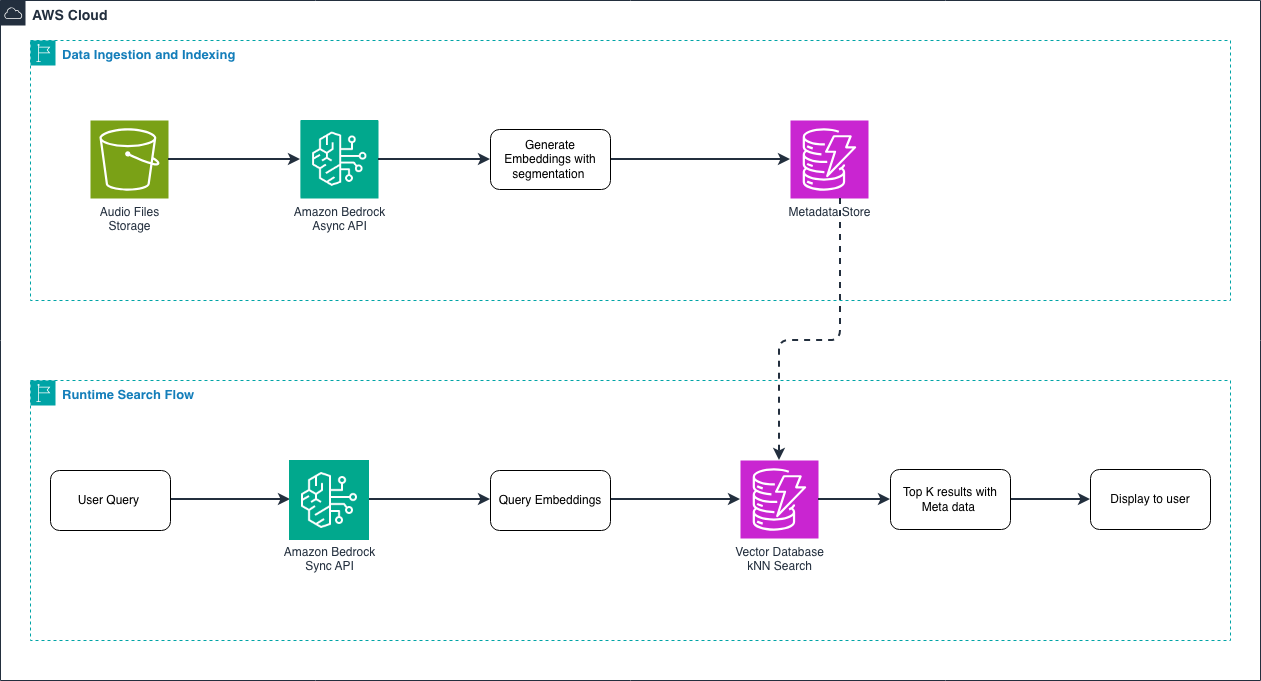

- Building intelligent audio search with Amazon Nova Embeddings: A deep dive into semantic audio understanding by Madhavi Evana on April 8, 2026 at 7:45 pm

This post walks you through understanding audio embeddings, implementing Amazon Nova Multimodal Embeddings, and building a practical search system for your audio content. You’ll learn how embeddings represent audio as vectors, explore the technical capabilities of Amazon Nova, and see hands-on code examples for indexing and querying your audio libraries. By the end, you’ll have the knowledge to deploy production-ready audio search capabilities.
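The core of embedding-based search can be sketched without any service calls: each clip is stored as a vector, and a query vector is ranked against the stored vectors by cosine similarity. The sketch below assumes the embeddings have already been produced elsewhere. It does not call Amazon Nova, and the three-dimensional vectors, the clip IDs, and the `AudioIndex` class are hypothetical stand-ins (real embeddings have far more dimensions, and a production system would typically use a vector database rather than a linear scan).

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class AudioIndex:
    """In-memory index mapping clip IDs to precomputed embedding vectors."""

    def __init__(self) -> None:
        self._vectors: Dict[str, List[float]] = {}

    def add(self, clip_id: str, embedding: Sequence[float]) -> None:
        self._vectors[clip_id] = list(embedding)

    def search(self, query: Sequence[float], top_k: int = 3) -> List[Tuple[str, float]]:
        """Return the top_k clips ranked by similarity to the query vector."""
        scored = [
            (clip_id, cosine_similarity(query, vector))
            for clip_id, vector in self._vectors.items()
        ]
        scored.sort(key=lambda item: item[1], reverse=True)
        return scored[:top_k]


if __name__ == "__main__":
    index = AudioIndex()
    # Made-up vectors standing in for real audio embeddings.
    index.add("rain", [0.9, 0.1, 0.0])
    index.add("speech", [0.0, 1.0, 0.2])
    index.add("music", [0.1, 0.2, 0.9])

    for clip_id, score in index.search([1.0, 0.0, 0.1], top_k=2):
        print(clip_id, round(score, 3))
```

Cosine similarity is used here because it compares direction rather than magnitude, a common choice for embedding search. Whether it is the right metric for a given embedding model is something to confirm in that model's documentation.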

- Reinforcement fine-tuning on Amazon Bedrock: Best practices by Nick McCarthy on April 8, 2026 at 7:43 pm

In this post, we explore where RFT is most effective, using the GSM8K mathematical reasoning dataset as a concrete example. We then walk through best practices for dataset preparation and reward function design, show how to monitor training progress using Amazon Bedrock metrics, and conclude with practical hyperparameter tuning guidelines informed by experiments across multiple models and use cases.

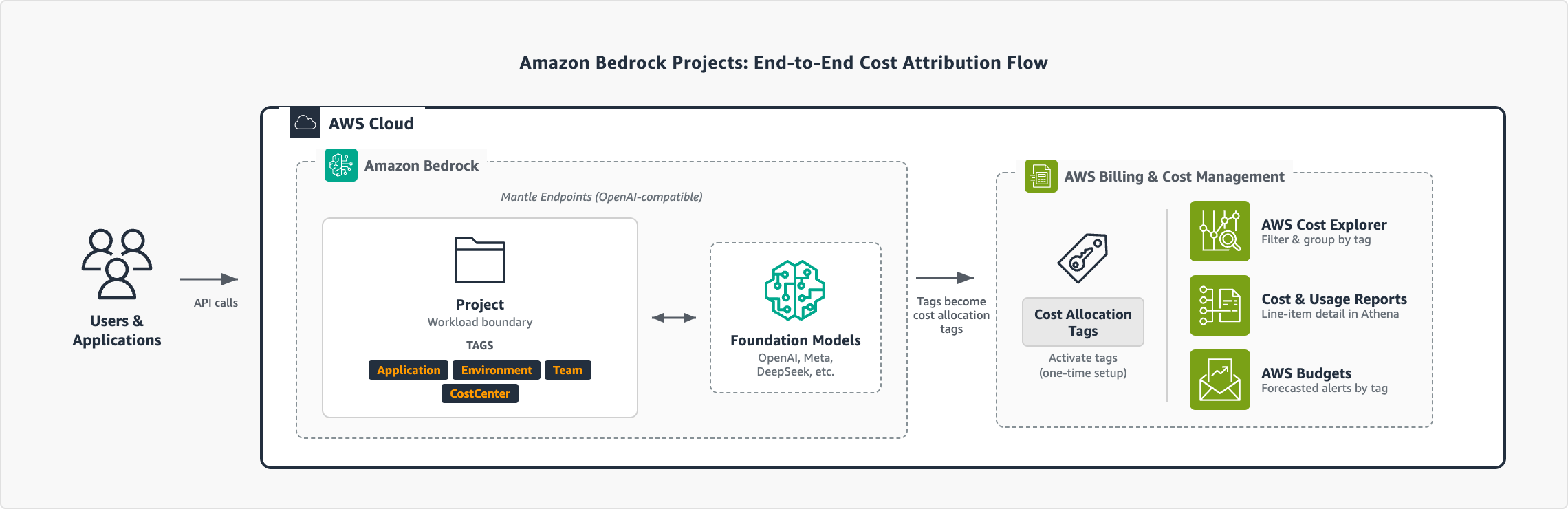

- Manage AI costs with Amazon Bedrock Projects by Ba’Carri Johnson on April 7, 2026 at 11:32 pm

With Amazon Bedrock Projects, you can attribute inference costs to specific workloads and analyze them in AWS Cost Explorer and AWS Data Exports. In this post, you will learn how to set up Projects end-to-end, from designing a tagging strategy to analyzing costs.

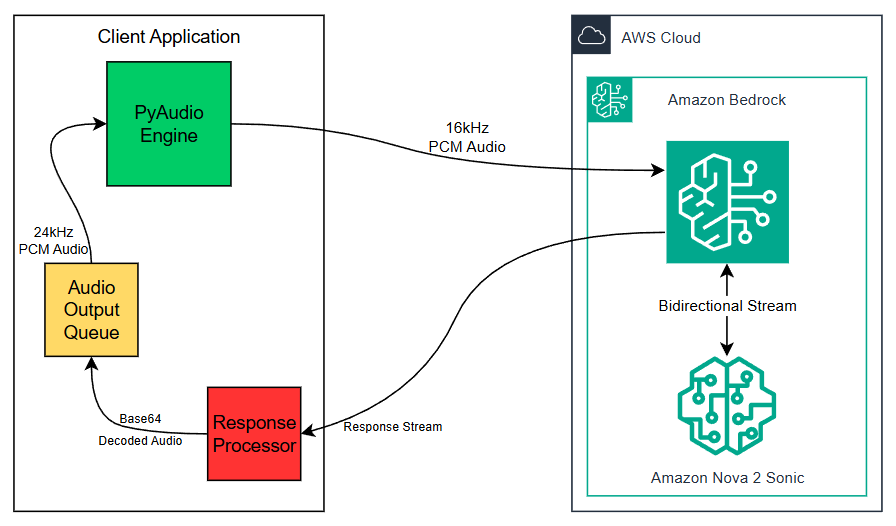

- Building real-time conversational podcasts with Amazon Nova 2 Sonic by Madhavi Evana on April 7, 2026 at 4:29 pm

This post walks through building an automated podcast generator that creates engaging conversations between two AI hosts on any topic, demonstrating the streaming capabilities of Nova Sonic, stage-aware content filtering, and real-time audio generation.

- Text-to-SQL solution powered by Amazon Bedrock by Monica Jain on April 7, 2026 at 4:28 pm

In this post, we show you how to build a natural text-to-SQL solution using Amazon Bedrock that transforms business questions into database queries and returns actionable answers.