AWS Big Data Blog Official Big Data Blog of Amazon Web Services

- Amazon OpenSearch Serverless introduces collection groups to optimize cost for multi-tenant workloads by Madhusudhan Narayana on February 26, 2026 at 4:36 pm

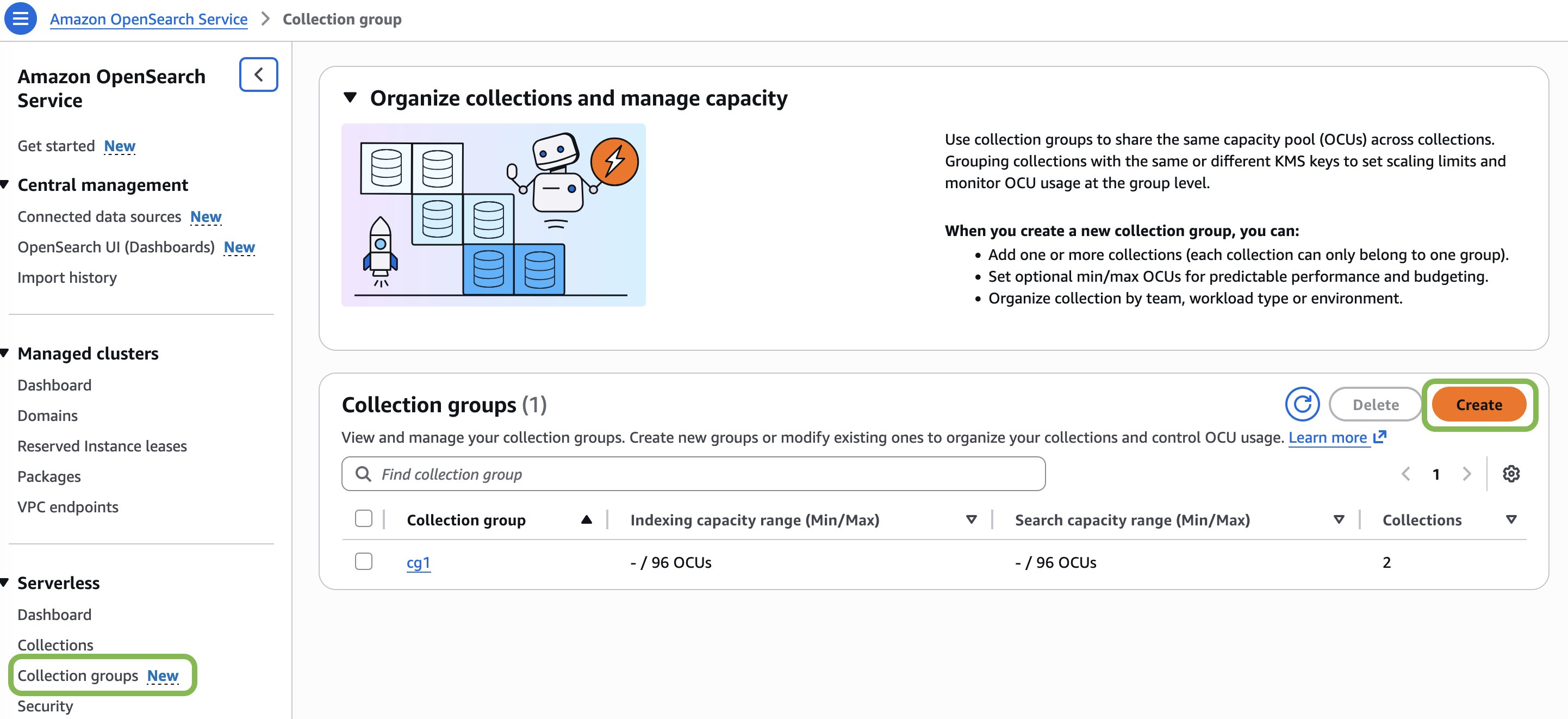

Today, we’re excited to announce the general availability of the collection groups feature for Amazon OpenSearch Serverless. With this feature, you can reduce compute costs for multi-tenant workloads while creating secure tenant boundaries through per-tenant encryption, giving you the flexibility to balance cost efficiency with the exact level of isolation and security your applications require.

- Improving order history search using semantic search with Amazon OpenSearch Service by Shwetabh . on February 26, 2026 at 4:34 pm

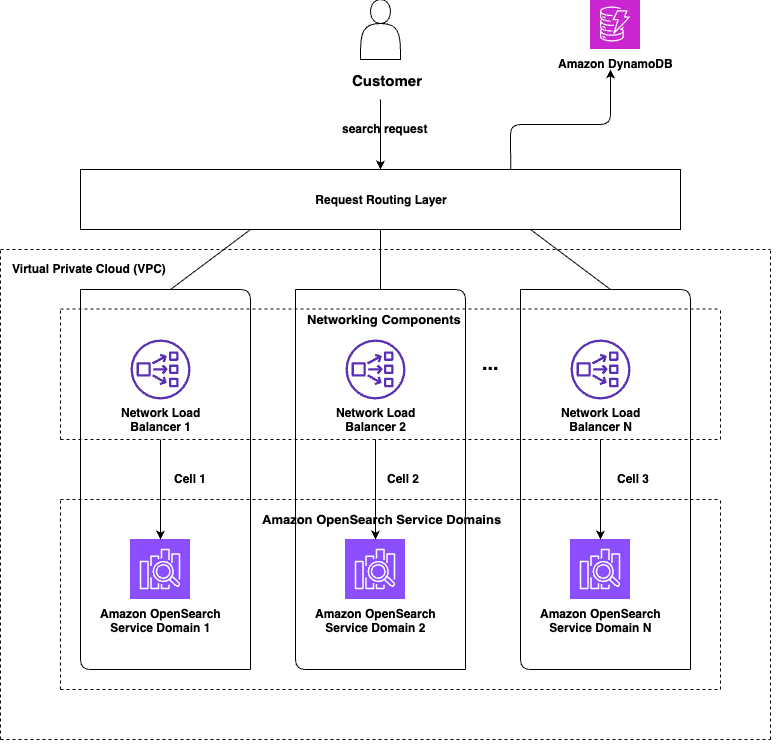

If you’ve ever shopped on Amazon, you’ve used Your Orders. This feature maintains your complete order history dating back to 1995, so you can track and manage every purchase you’ve made. The order history search feature lets you find your past purchases by entering keywords in the search bar. Beyond just finding items, it provides a straightforward way to repurchase the same or similar items, saving you time and effort. In this post, we show you how the Your Orders team improved order history search by introducing semantic search capabilities on top of our existing lexical search system, using Amazon OpenSearch Service and Amazon SageMaker.

- How Swiss Life Germany automated data governance and collaboration with Amazon SageMaker by Tim Kopacz on February 25, 2026 at 8:28 pm

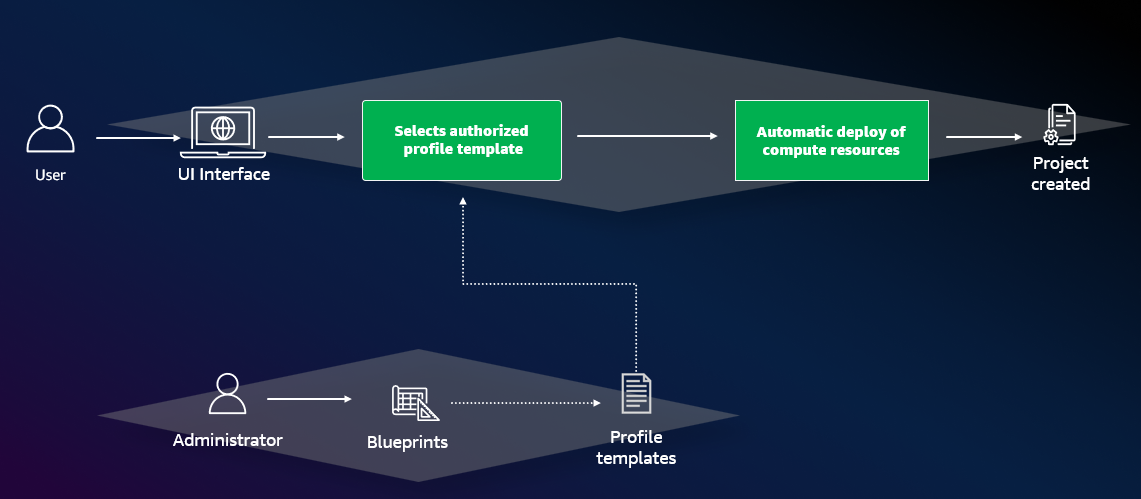

Swiss Life Germany, a leading provider of customized pension products with over 100 years of experience, recently transitioned from legacy on-premises infrastructure to a modern cloud architecture. To enable secure data sharing and cross-departmental collaboration in this regulated environment, they implemented Amazon SageMaker with a custom Terraform pattern. This post demonstrates how Swiss Life Germany aligned SageMaker’s agility with their rigorous infrastructure as code standards, providing a blueprint for platform engineers and data architects in highly regulated enterprises.

- Implement a data mesh pattern in Amazon SageMaker Catalog without changing applications by Paolo Romagnoli on February 23, 2026 at 7:41 pm

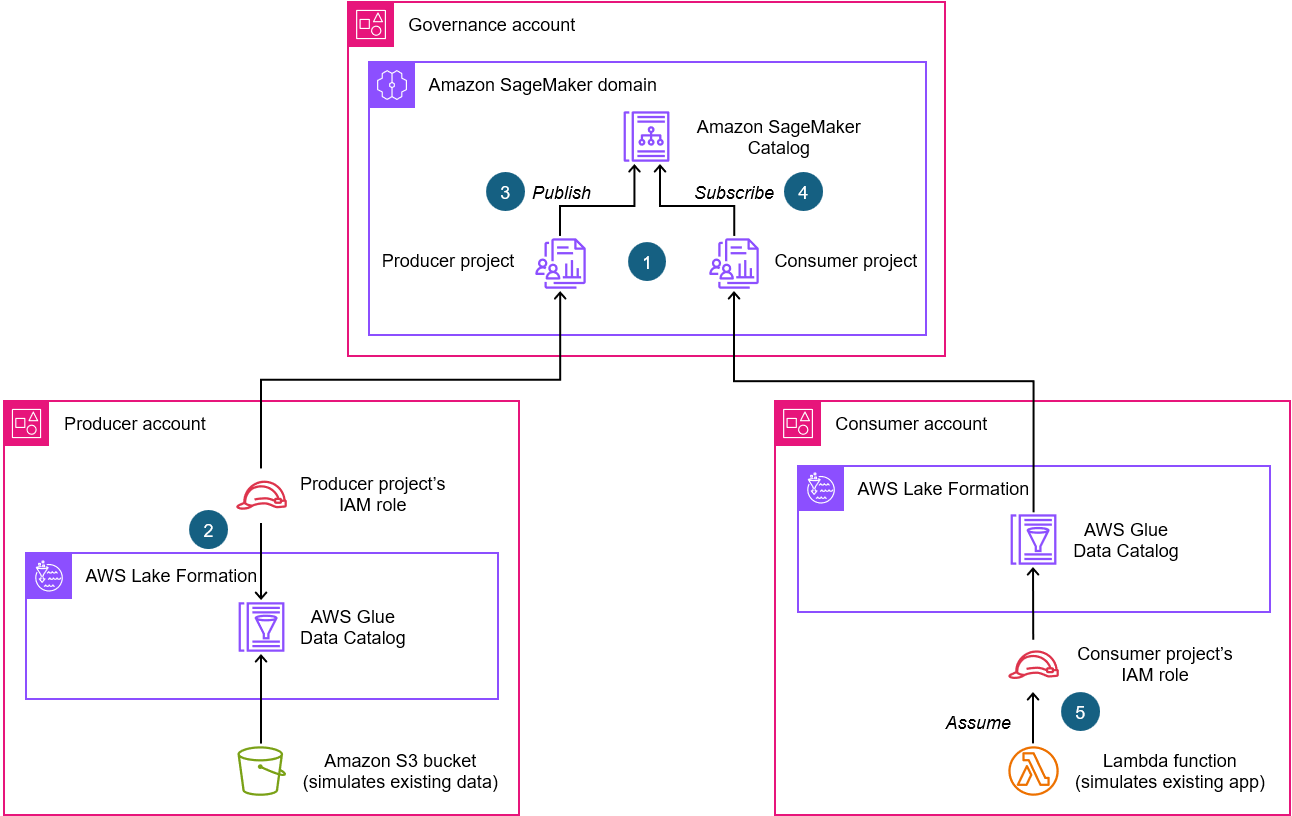

In this post, we simulate a data producer and data consumer scenario as it exists before Amazon SageMaker Catalog adoption. We use a sample dataset to simulate existing data and an AWS Lambda function to simulate an existing application, then implement a data mesh pattern using Amazon SageMaker Catalog while keeping your current data repositories and consumer applications unchanged.

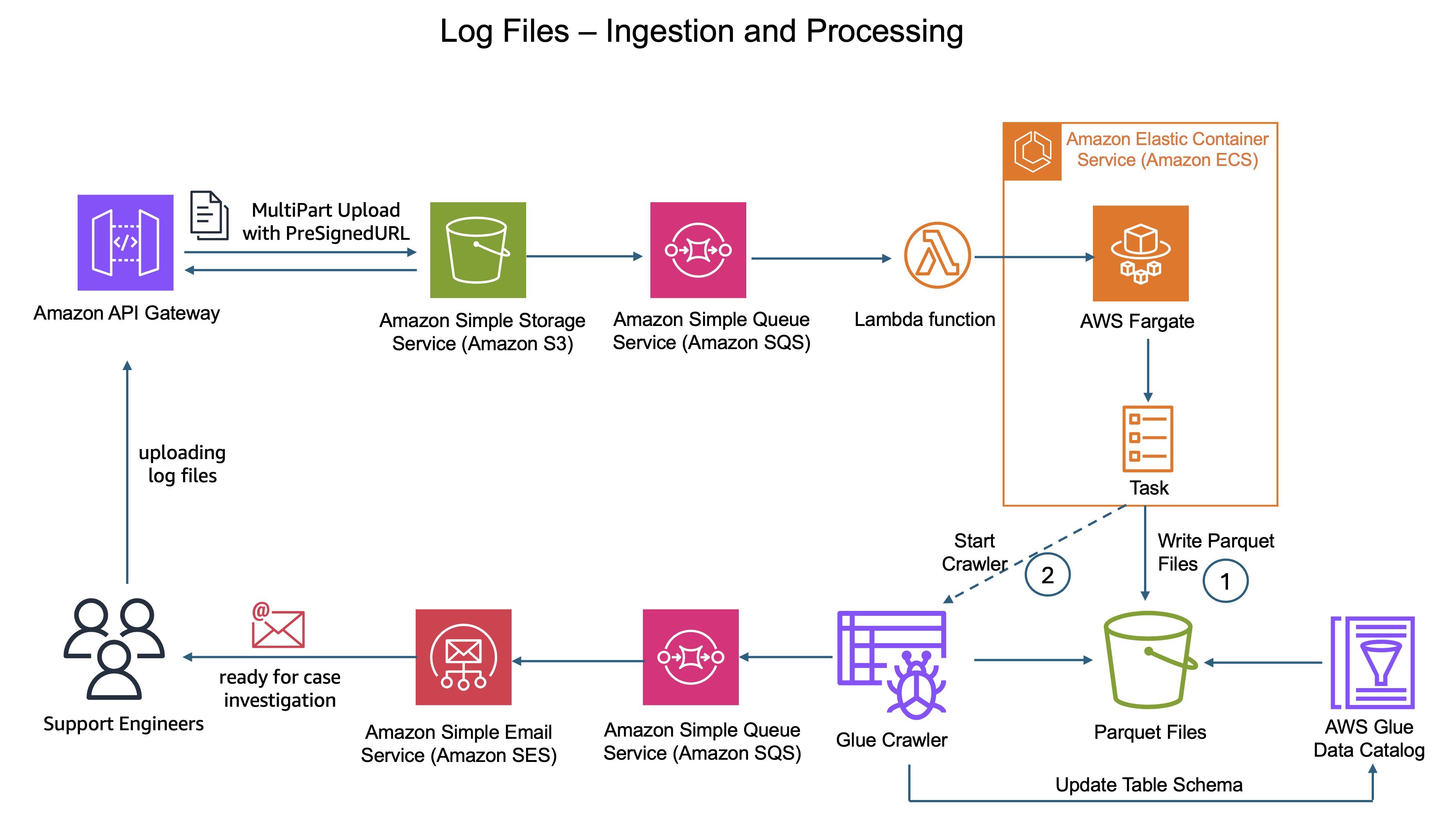

- How CyberArk uses Apache Iceberg and Amazon Bedrock to deliver up to 4x support productivity by Moshiko Ben Abu on February 18, 2026 at 8:17 pm

CyberArk is a global leader in identity security. Centered on intelligent privilege controls, it provides comprehensive security for human, machine, and AI identities across business applications, distributed workforces, and hybrid cloud environments. In this post, we show you how CyberArk redesigned their support operations by combining Iceberg’s intelligent metadata management with AI-powered automation from Amazon Bedrock. You’ll learn how to simplify data processing flows, automate log parsing for diverse formats, and build autonomous investigation workflows that scale automatically.

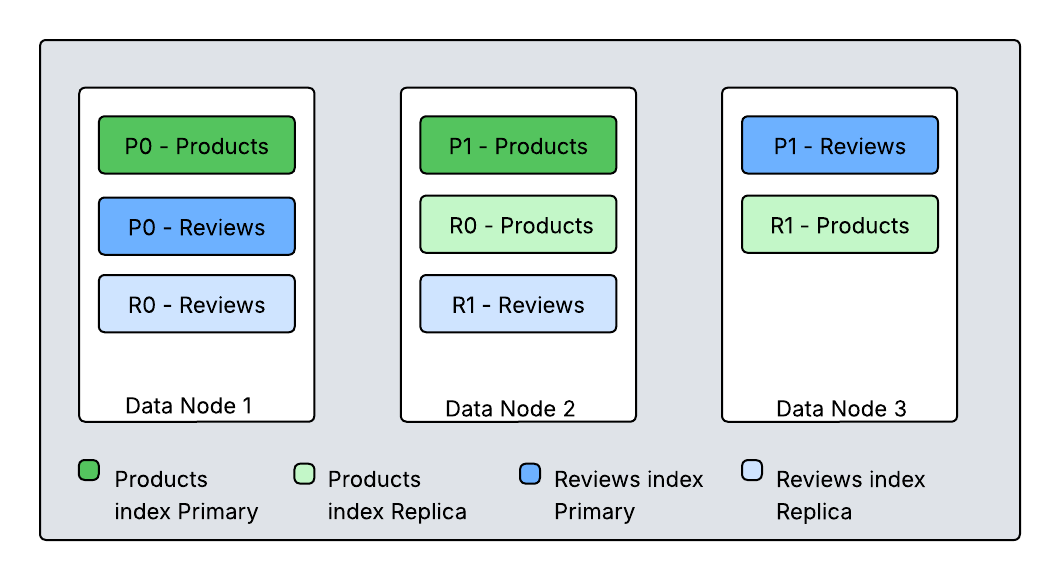

- Best practices for right-sizing Amazon OpenSearch Service domains by Nikhil Agarwal on February 18, 2026 at 8:16 pm

In this post, we guide you through the steps to determine if your OpenSearch Service domain is right-sized, using AWS tools and best practices to optimize your configuration for workloads like log analytics, search, vector search, or synthetic data testing.

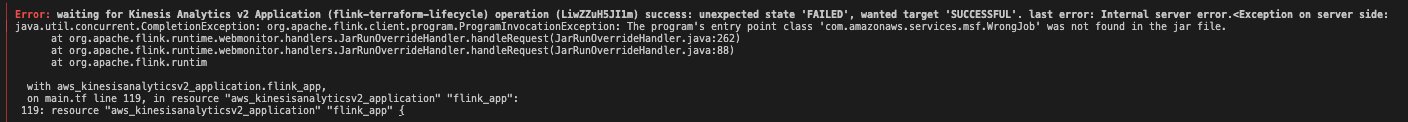

- Amazon Managed Service for Apache Flink application lifecycle management with Terraform by Felix John on February 17, 2026 at 5:49 pm

In this post, you’ll learn how to use Terraform to automate and streamline your Apache Flink application lifecycle management on Amazon Managed Service for Apache Flink. We’ll walk you through the complete lifecycle including deployment, updates, scaling, and troubleshooting common issues. This post builds upon our two-part blog series “Deep dive into the Amazon Managed Service for Apache Flink application lifecycle”.

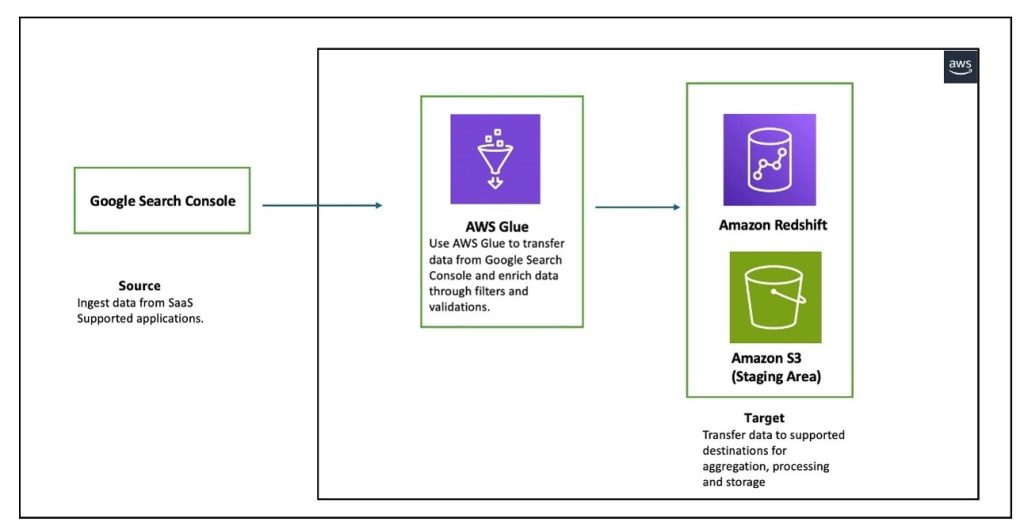

- Build a data pipeline from Google Search Console to Amazon Redshift using AWS Glue by Anirudh Chawla on February 17, 2026 at 5:48 pm

In this post, we explore how AWS Glue extract, transform, and load (ETL) capabilities connect Google applications and Amazon Redshift, helping you unlock deeper insights and drive data-informed decisions through automated data pipeline management. We walk you through the process of using AWS Glue to integrate data from Google Search Console and write it to Amazon Redshift.

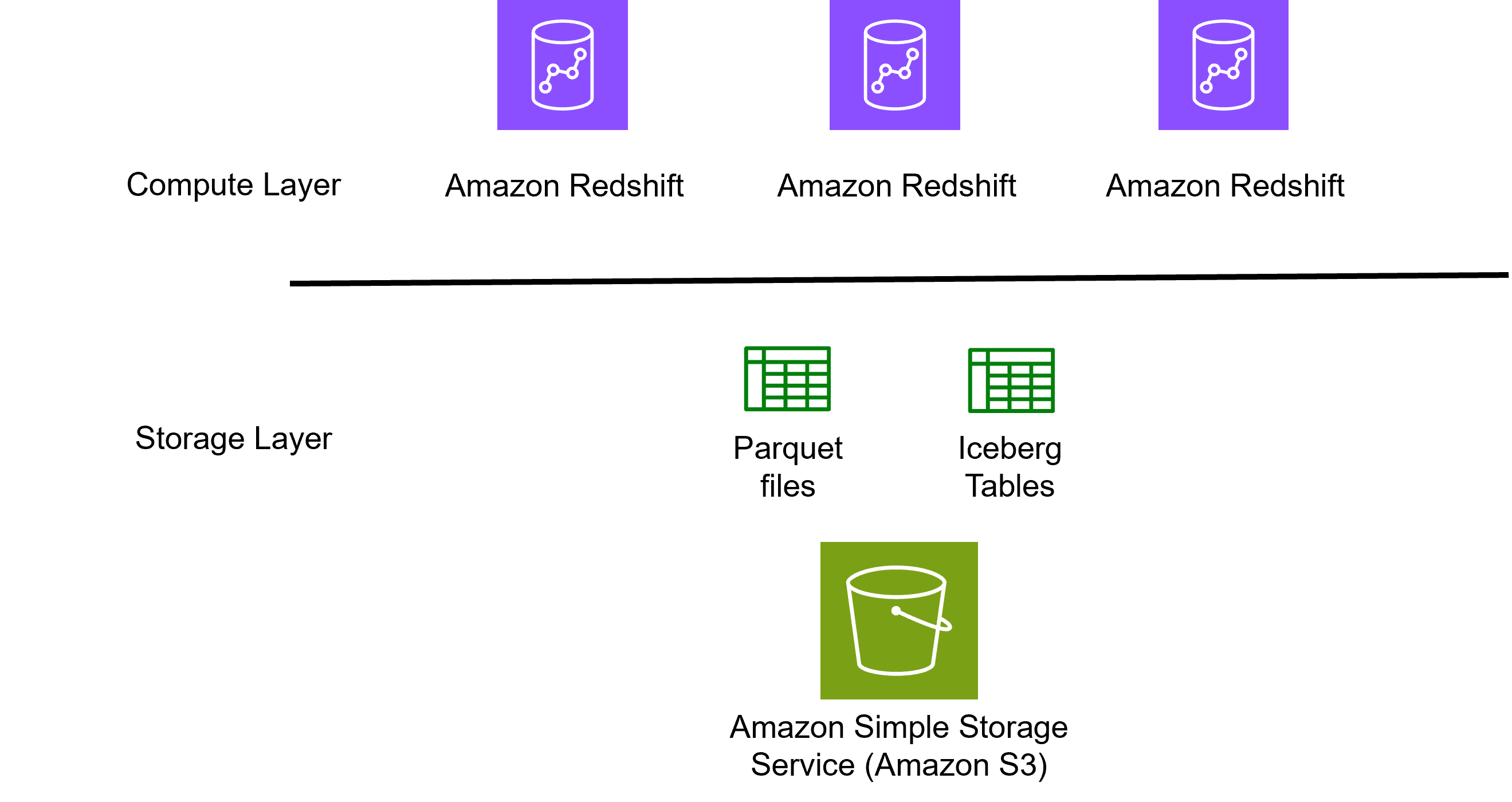

- Verisk cuts processing time and storage costs with Amazon Redshift and lakehouse by Karthick Shanmugam, Srinivasa Are on February 16, 2026 at 6:10 pm

Verisk, a catastrophe modeling SaaS provider serving insurance and reinsurance companies worldwide, cut aggregation processing time from hours to minutes while reducing storage costs by implementing a lakehouse architecture with Amazon Redshift and Apache Iceberg. If you’re managing billions of catastrophe modeling records across hurricanes, earthquakes, and wildfires, this approach eliminates the traditional compute-versus-cost trade-off by separating storage from processing power. In this post, we examine Verisk’s lakehouse implementation, focusing on four architectural decisions that delivered measurable improvements.

- Amazon OpenSearch Service 101: T-shirt size your domain for e-commerce search by Abe Raghib on February 16, 2026 at 6:09 pm

While general sizing guidelines for OpenSearch Service domains are covered in detail in OpenSearch Service documentation, in this post we specifically focus on T-shirt-sizing OpenSearch Service domains for e-commerce search workloads. T-shirt sizing simplifies complex capacity planning by categorizing workloads into sizes like XS, S, M, L, XL based on key workload parameters such as data volume and query concurrency.

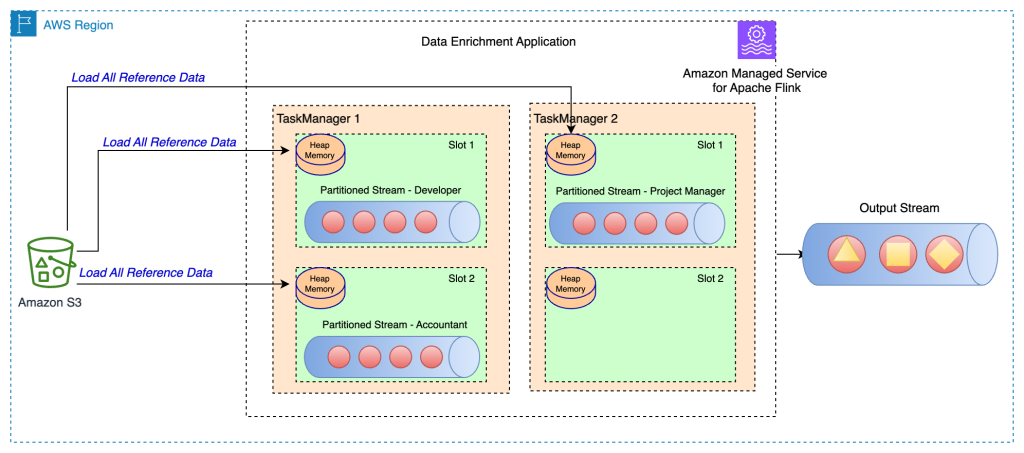
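As a rough illustration of the T-shirt sizing idea described above, the sketch below maps two workload parameters (data volume and query concurrency) to a size label. The threshold values and the names `DATA_GB_LIMITS`, `QPS_LIMITS`, and `tshirt_size` are hypothetical placeholders chosen for this example; they are not the figures used in the post or in OpenSearch Service documentation.

```python
from bisect import bisect_right

SIZES = ["XS", "S", "M", "L", "XL"]

# Hypothetical boundaries between adjacent sizes (assumptions for illustration only).
# A value below the first limit is XS; a value at or above the last limit is XL.
DATA_GB_LIMITS = [10, 100, 500, 2000]      # total indexed data, in GB
QPS_LIMITS = [50, 500, 2000, 10000]        # peak queries per second


def tshirt_size(data_gb: float, peak_qps: float) -> str:
    """Return the size label required by the more demanding of the two parameters."""
    data_idx = bisect_right(DATA_GB_LIMITS, data_gb)
    qps_idx = bisect_right(QPS_LIMITS, peak_qps)
    # Size for the larger requirement so neither dimension is under-provisioned.
    return SIZES[max(data_idx, qps_idx)]


if __name__ == "__main__":
    print(tshirt_size(50, 20))
    print(tshirt_size(5, 3000))
```

Taking the maximum of the two indexes is one possible design choice: a small catalog with very high query concurrency still lands in a larger size.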

- Common streaming data enrichment patterns in Amazon Managed Service for Apache Flink by Ali Alemi on February 13, 2026 at 7:46 pm

This post was originally published in March 2024 and updated in February 2026. Stream data processing allows you to act on data in real time. Real-time data analytics can help you have on-time and optimized responses while improving overall customer experience. Apache Flink is a distributed computation framework that allows for stateful real-time data processing. It

- Matching your Ingestion Strategy with your OpenSearch Query Patterns by Rakan Kandah on February 12, 2026 at 6:13 pm

In this post, we demonstrate how you can create a custom index analyzer in OpenSearch to implement autocomplete functionality efficiently by using the Edge n-gram tokenizer to match prefix queries without using wildcards.

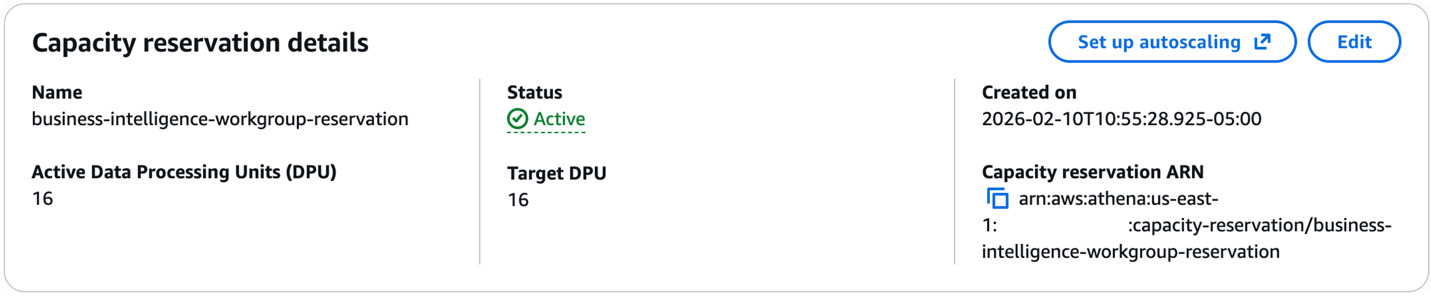
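To make the edge n-gram approach mentioned above more concrete, here is a minimal sketch. It defines index settings of the shape OpenSearch accepts for a custom analyzer built on the `edge_ngram` tokenizer, plus a simplified pure-Python simulation of the tokens such a tokenizer would emit. The analyzer and tokenizer names, the `title` field, and the `min_gram`/`max_gram` values are assumptions made for this example, not details taken from the post.

```python
import json
import re

# Hypothetical index body: names and gram lengths are illustrative assumptions.
INDEX_BODY = {
    "settings": {
        "analysis": {
            "tokenizer": {
                "autocomplete_tokenizer": {
                    "type": "edge_ngram",
                    "min_gram": 2,
                    "max_gram": 10,
                    "token_chars": ["letter", "digit"],
                }
            },
            "analyzer": {
                "autocomplete_analyzer": {
                    "type": "custom",
                    "tokenizer": "autocomplete_tokenizer",
                    "filter": ["lowercase"],
                }
            },
        }
    },
    "mappings": {
        "properties": {
            "title": {
                "type": "text",
                # Prefixes are generated at index time only ...
                "analyzer": "autocomplete_analyzer",
                # ... so the user's input is matched as typed, without wildcards.
                "search_analyzer": "standard",
            }
        }
    },
}


def edge_ngrams(text: str, min_gram: int = 2, max_gram: int = 10) -> list:
    """Simplified simulation: split on non-alphanumerics, lowercase, emit prefixes."""
    tokens = []
    for word in re.findall(r"[A-Za-z0-9]+", text):
        word = word.lower()
        for n in range(min_gram, min(len(word), max_gram) + 1):
            tokens.append(word[:n])
    return tokens


if __name__ == "__main__":
    print(json.dumps(INDEX_BODY, indent=2))
    print(edge_ngrams("Open Search"))
```

Because the prefixes are stored in the index, a plain match query for a partial term such as `sea` can find documents containing `search` without a wildcard scan, at the cost of a larger index.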

- Amazon Athena adds 1-minute reservations and new capacity control features by Manan Nayar on February 11, 2026 at 10:16 pm

Amazon Athena is a serverless interactive query service that makes it easy to analyze data using SQL. With Athena, there’s no infrastructure to manage; you simply submit queries and get results. Capacity Reservations is a feature of Athena that addresses the need to run critical workloads by providing dedicated serverless capacity for workloads you specify. In this post, we highlight three new capabilities that make Capacity Reservations more flexible and easier to manage: reduced minimums for fine-grained capacity adjustments, an autoscaling solution for dynamic workloads, and capacity cost and performance controls.

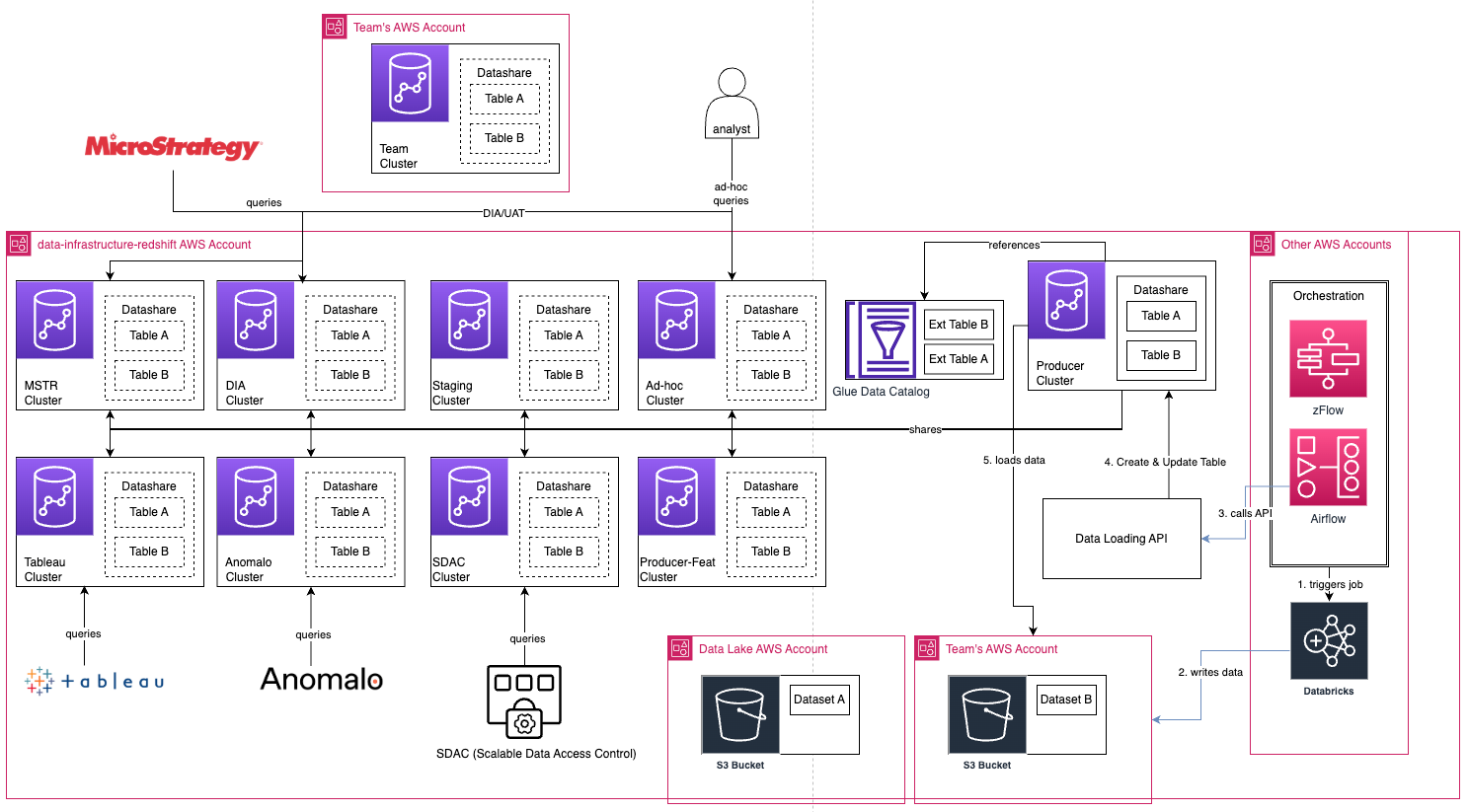

- How Zalando innovates their Fast-Serving layer by migrating to Amazon Redshift by Srinivasan Molkuva, Sabri Ömür Yıldırmaz, Prasanna Sudhindrakumar, Paritosh Kumar Pramanick on February 10, 2026 at 11:37 pm

In this post, we show how Zalando migrated their fast-serving layer data warehouse to Amazon Redshift to achieve better price-performance and scalability.

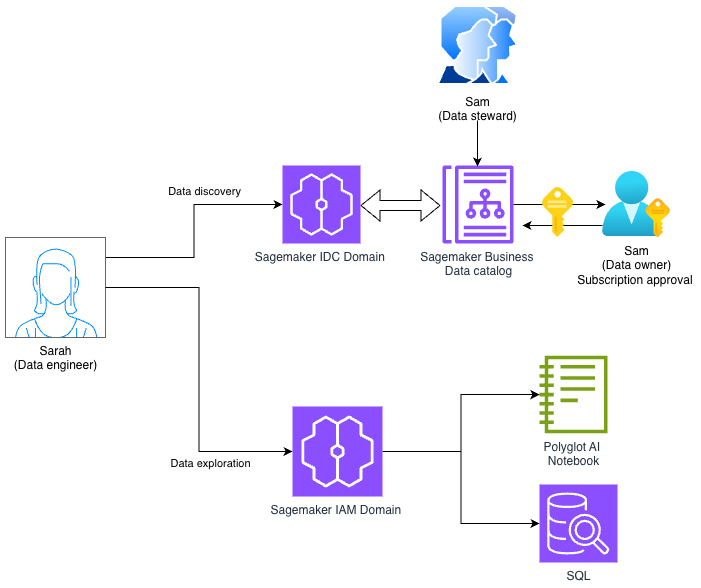

- Using Amazon SageMaker Unified Studio Identity Center (IDC) and IAM-based domains together by Praveen Kumar on February 9, 2026 at 10:27 pm

In this post, we demonstrate how to access an Amazon SageMaker Unified Studio IDC-based domain with a new IAM-based domain using role reuse and attribute-based access control.

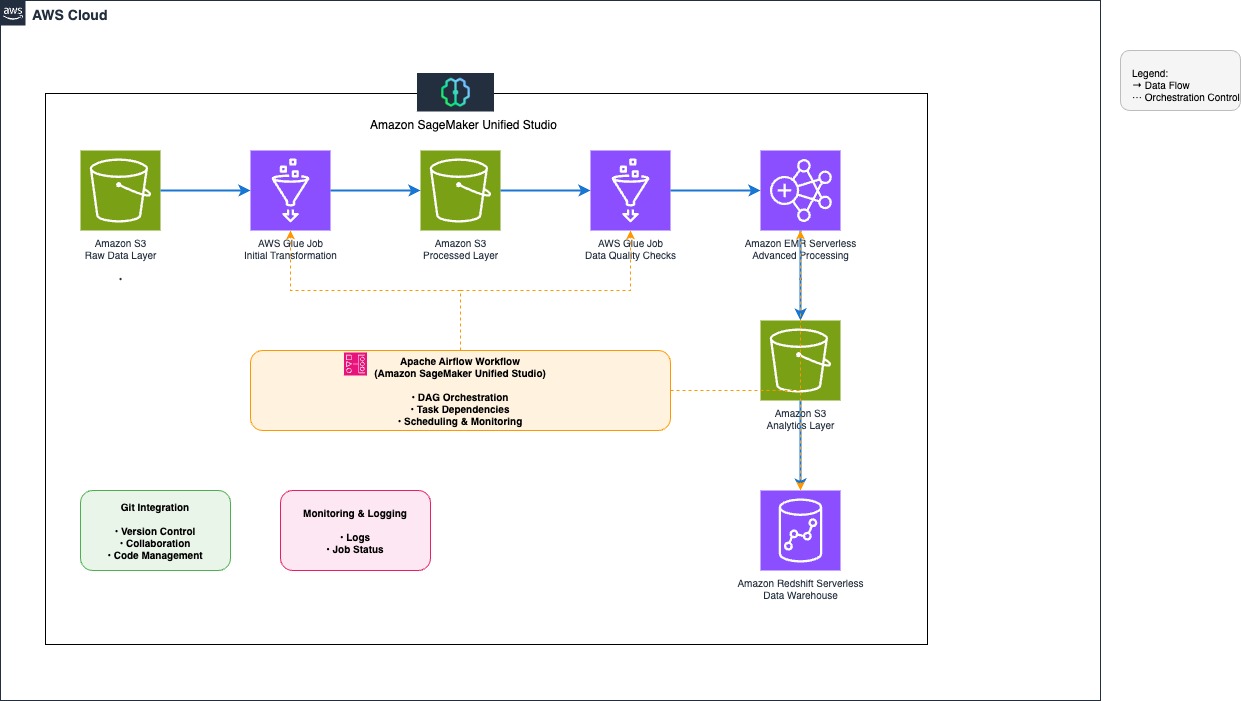

- Orchestrate end-to-end scalable ETL pipeline with Amazon SageMaker workflows by Shubham Kumar on February 9, 2026 at 10:14 pm

This post explores how to build and manage a comprehensive extract, transform, and load (ETL) pipeline using SageMaker Unified Studio workflows through a code-based approach. We demonstrate how to use a single, integrated interface to handle all aspects of data processing, from preparation to orchestration, by using AWS services including Amazon EMR, AWS Glue, Amazon Redshift, and Amazon MWAA. This solution streamlines the data pipeline through a single UI.

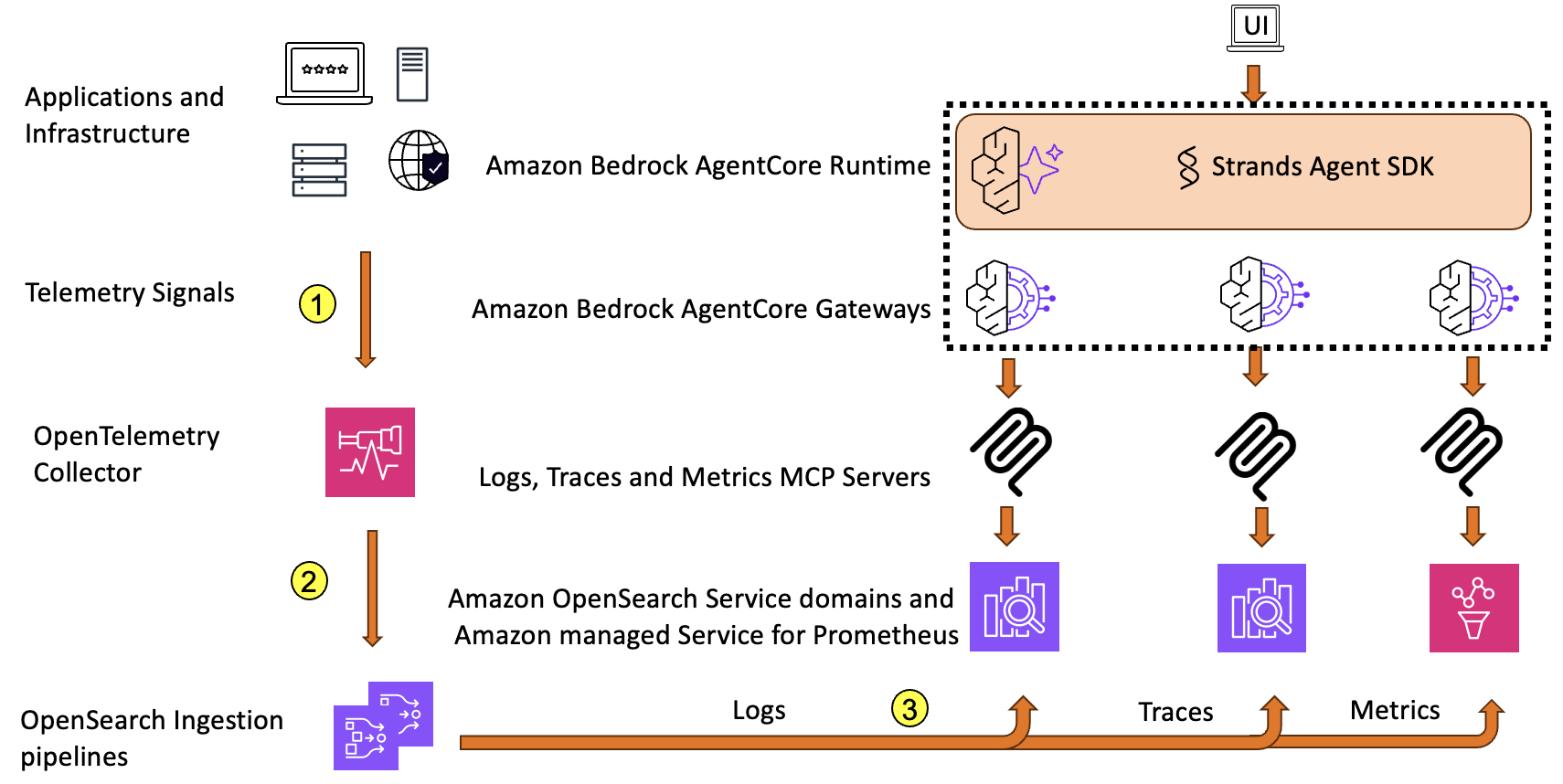

- Reduce Mean Time to Resolution with an observability agent by Muthu Pitchaimani on February 5, 2026 at 7:48 pm

In this post, we present an observability agent built with OpenSearch Service and Amazon Bedrock AgentCore that can help surface root causes, deliver insights faster, handle multiple query-correlation cycles, and ultimately reduce mean time to resolution (MTTR) even further.

- Amazon OpenSearch Ingestion 101: Set CloudWatch alarms for key metrics by Utkarsh Agarwal on February 5, 2026 at 7:47 pm

This post provides an in-depth look at setting up Amazon CloudWatch alarms for OpenSearch Ingestion pipelines. It goes beyond our recommended alarms to help identify bottlenecks in the pipeline, whether that’s in the sink, the OpenSearch clusters data is being sent to, the processors, or the pipeline not pulling or accepting enough from the source. This post will help you proactively monitor and troubleshoot your OpenSearch Ingestion pipelines.

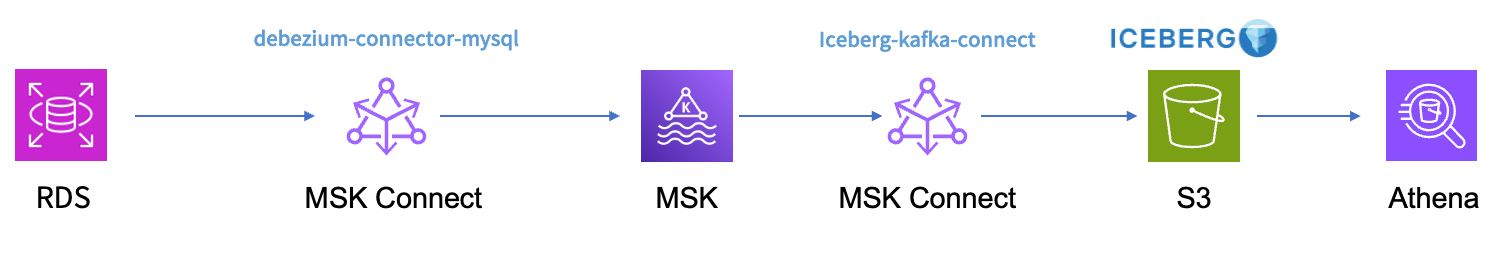

- Use Amazon MSK Connect and Iceberg Kafka Connect to build a real-time data lake by Xiao Huang on February 3, 2026 at 6:42 pm

In this post, we demonstrate how to use Iceberg Kafka Connect with Amazon Managed Streaming for Apache Kafka (Amazon MSK) Connect to accelerate real-time data ingestion into data lakes, simplifying the synchronization process from transactional databases to Apache Iceberg tables.

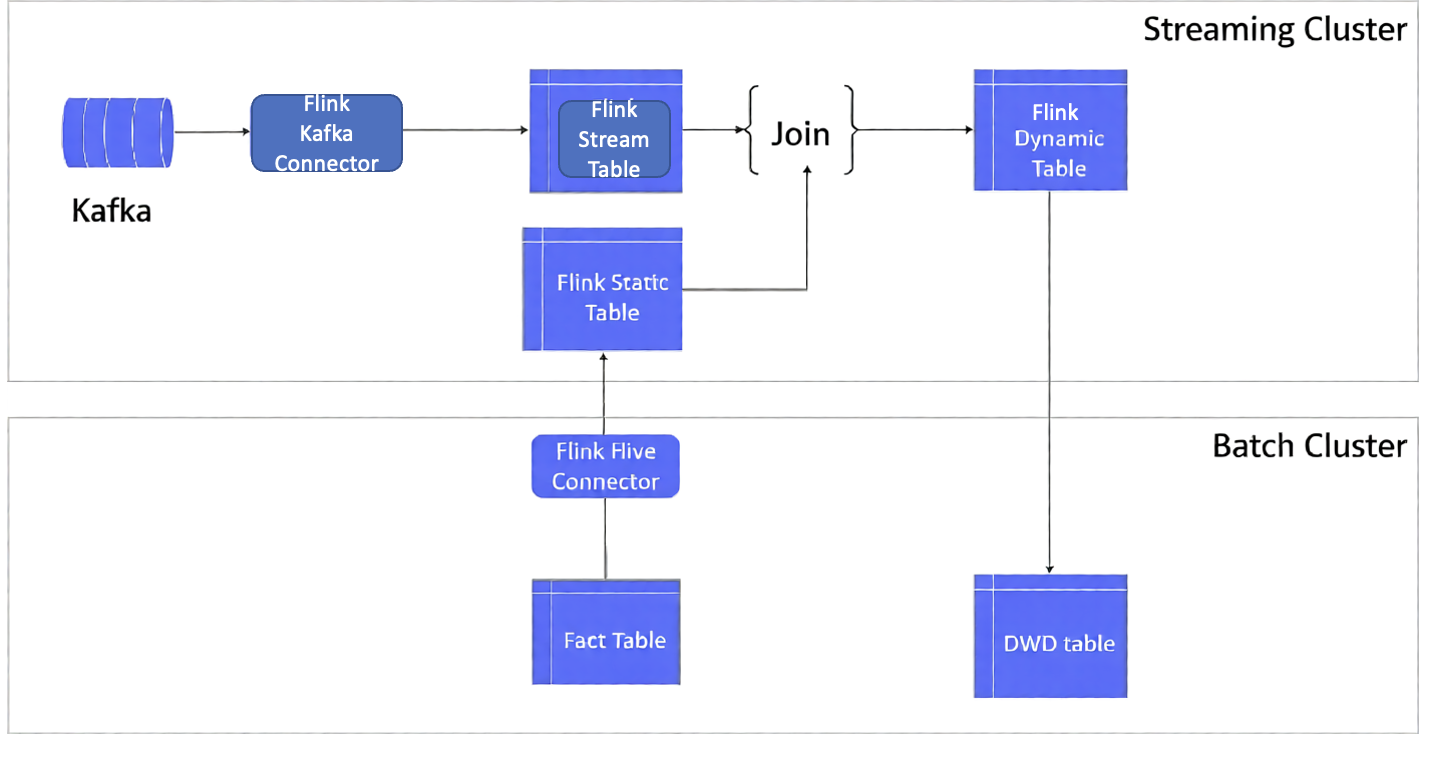

- Optimizing Flink’s join operations on Amazon EMR with Alluxio by Qingyuan Tang on February 3, 2026 at 6:41 pm

In this post, we show you how to implement real-time data correlation using Apache Flink to join streaming order data with historical customer and product information, enabling you to make informed decisions based on comprehensive, up-to-date analytics. We also introduce an optimized solution to automatically load Hive dimension table data into Alluxio Universal Flash Storage (UFS) through the Alluxio cache layer. This enables Flink to perform temporal joins on changing data, accurately reflecting the content of a table at specific points in time.
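The temporal join mentioned above can be illustrated with a small sketch in plain Python (not Flink): each order is matched with the version of a dimension row that was valid at the order's timestamp. The record layout, the field names `product_id` and `ts`, and the function `temporal_join` are hypothetical and exist only for this example.

```python
from bisect import bisect_right


def temporal_join(orders, dim_versions):
    """Join each order with the dimension version valid at the order's timestamp.

    orders: list of dicts with hypothetical keys "product_id" and "ts".
    dim_versions: dict mapping product_id to a list of (valid_from, attributes)
                  tuples, sorted by valid_from in ascending order.
    """
    joined = []
    for order in orders:
        versions = dim_versions.get(order["product_id"], [])
        valid_from_times = [valid_from for valid_from, _ in versions]
        # Index of the latest version whose valid_from is <= the order timestamp.
        idx = bisect_right(valid_from_times, order["ts"]) - 1
        attrs = versions[idx][1] if idx >= 0 else None
        joined.append({**order, "product": attrs})
    return joined


if __name__ == "__main__":
    dims = {"p1": [(0, {"price": 10}), (100, {"price": 12})]}
    orders = [{"product_id": "p1", "ts": 50}, {"product_id": "p1", "ts": 150}]
    for row in temporal_join(orders, dims):
        print(row)
```

An order placed before the first known version, or for an unknown product, gets `None` for its dimension attributes; a real pipeline would decide whether to drop, buffer, or flag such rows.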