- Powerful AI is making facial recognition better at identifying you
  by Vijayan Asari, Professor of Electrical and Computer Engineering, University of Dayton on June 2, 2026 at 12:25 pm

New AI-based facial recognition techniques are reducing false positive and false negative matches.

- From gait analysis to fingerprint theft, how worried should we be about the latest advances in biometric technology?
  by Oli Buckley, Professor in Cyber Security, Loughborough Cyber Institute, Loughborough University on May 26, 2026 at 3:02 pm

You can wear a mask, pull up a hood, avoid looking at a camera – but you cannot easily change how you walk.

- How AI can lead to false arrests and wrongful convictions
  by Maria Lungu, Postdoctoral Researcher of Law and Public Administration, University of Virginia on May 11, 2026 at 12:41 pm

Danger arises when law enforcement believes that AI models are retrieving certainties rather than generating likelihoods.

- Facial recognition data is a key to your identity – if stolen, you can’t just change the locks
  by Jonathan S. Weissman, Principal Lecturer of Cybersecurity, Rochester Institute of Technology on April 28, 2026 at 12:29 pm

You can change a stolen password or credit card, but you can’t reset your face when your biometric data is breached.

- Smart glasses with facial recognition could be devastating to sex workers and other vulnerable people
  by Brynn Colledge, PhD Student in Sociology, Teaching Assistant, University of Waterloo on March 31, 2026 at 2:00 pm

If Meta moves forward with plans to integrate facial recognition technologies into its AI-enabled smart glasses, some of us have more to lose than others.

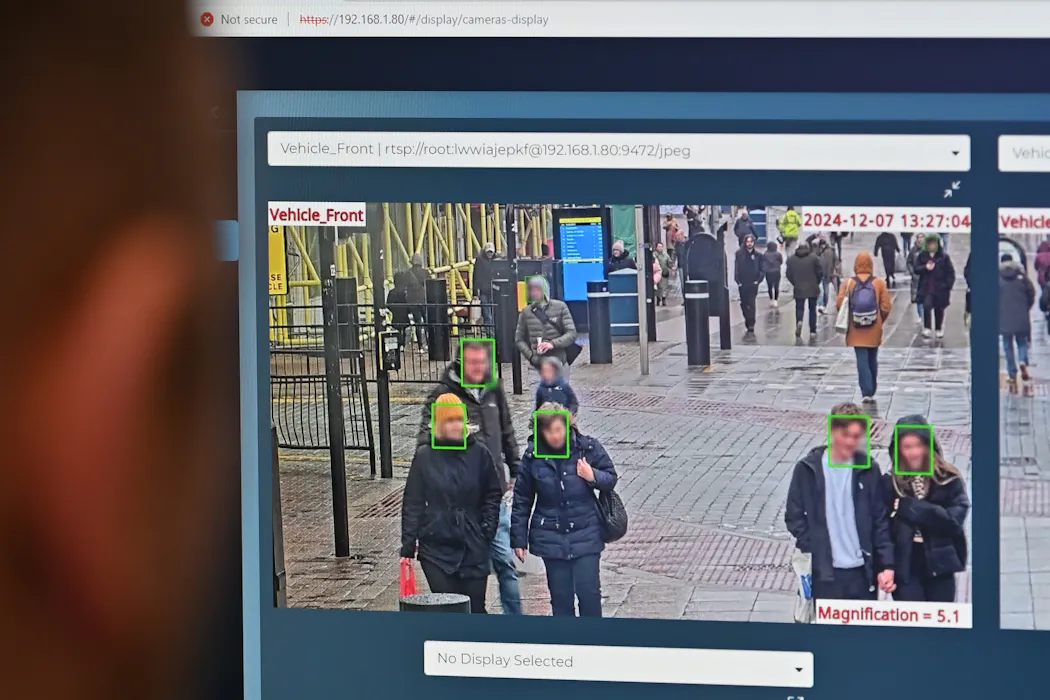

- Is someone watching you? Facial recognition tech is here and Canada offers little privacy protection
  by Neil McArthur, Director, Centre for Professional and Applied Ethics, University of Manitoba on March 9, 2026 at 7:11 pm

Canada urgently needs stronger privacy laws, ones that deal explicitly with facial recognition.

- The Capture season three: experts in facial recognition and AI decipher the fact from the fiction
  by Eilidh Noyes, Lecturer in Cognitive Psychology, University of Leeds on March 6, 2026 at 11:31 am

It is not feasible, nor indeed ethical, to run a facial recognition system against all images on the internet.

- Why ICE’s body camera policies make the videos unlikely to improve accountability and transparency
  by Stephanie Lessing, Adjunct Professor of Public Policy, UMass Boston on February 24, 2026 at 1:49 pm

For body cameras to function as transparency tools, wrongdoing would have to be consistently penalized, highlighting the consequences of noncompliance.

- Bunnings decision may open door to facial recognition surveillance free-for-all
  by Margarita Vladimirova, Sessional Academic, Faculty of Law, Monash University on February 10, 2026 at 4:13 am

Businesses using facial recognition cameras need customer consent – but a new ruling could open a loophole in the law.

- Facial recognition technology used by police is now very accurate – but public understanding lags behind
  by Kay Ritchie, Associate Professor in Cognitive Psychology, University of Lincoln on January 30, 2026 at 4:53 pm

It’s a common misconception that facial recognition technology captures and stores an image of your face.

- How facial recognition for bears can help ecologists manage wildlife
  by Emily Wanderer, Associate Professor of Anthropology, University of Pittsburgh on January 7, 2026 at 1:37 pm

Recent advances in computer vision and other types of artificial intelligence offer an opportunity for facial recognition to apply to bears and other animals.

- How new asylum policies will affect child refugees
  by Ala Sirriyeh, Senior Lecturer in Sociology, Lancaster University on November 24, 2025 at 1:43 pm

Child refugees could live their entire childhoods in Britain with no guarantee that they will remain in the place they have come to think of as home.

- How safe is your face? The pros and cons of having facial recognition everywhere
  by Joanne Orlando, Researcher, Digital Wellbeing, Western Sydney University on September 30, 2025 at 1:07 am

Before you scan your face, you might want to think twice about the risks.

- Kmart broke privacy laws by scanning customers’ faces. What did it do wrong, and why?
  by Margarita Vladimirova, PhD in Privacy Law and Facial Recognition Technology, Deakin University on September 18, 2025 at 7:42 am

The Privacy Commissioner found Kmart should have tried other options before facial recognition systems – and told customers what it was doing.

- How states are placing guardrails around AI in the absence of strong federal regulation
  by Anjana Susarla, Professor of Information Systems, Michigan State University on August 6, 2025 at 12:55 pm

With a potential ban of state regulation of AI soundly defeated, states are continuing to take the lead on protecting people from the technology’s potential harms – for now.

- AI helps tell snow leopards apart, improving population counts for these majestic mountain predators
  by Eve Bohnett, Assistant Scholar, Center for Landscape Conservation Planning, University of Florida on June 18, 2025 at 12:45 pm

Conservationists have to search rough terrain and thousands of automated photographs to find the elusive cats. Artificial intelligence can help them work more accurately and more efficiently.

- Banning face coverings, expanding facial recognition – how the UK government and police are eroding protest rights
  by Daragh Murray, Senior Lecturer in International Human Rights Law, Queen Mary University of London on March 28, 2025 at 1:11 pm

Being identified was once only a possibility, now it is a near certainty.

- Surveillance tech is changing our behaviour – and our brains
  by Kiley Seymour, Associate Professor of Neuroscience and Behaviour, University of Technology Sydney on January 14, 2025 at 12:33 am

A new study shows that simply knowing we are being watched can unconsciously heighten our awareness of other people’s gaze.

- How can Australia actually keep young people off social media and porn sites? A new trial will test 3 options
  by Toby Murray, Associate Professor of Cybersecurity, School of Computing and Information Systems, The University of Melbourne on December 2, 2024 at 2:52 am

The trial is testing age verification, age estimation and age inference. All three options come with problems.

- Five ways you might already encounter AI in cities (and not realise it)
  by Noortje Marres, Professor in Science, Technology and Society, University of Warwick on November 26, 2024 at 1:20 pm

From facial recognition to delivery drones, cities around the UK are already trialling AI tech.

- AI and criminal justice: How AI can support — not undermine — justice
  by Benjamin Perrin, Professor of Law, University of British Columbia on November 19, 2024 at 5:06 pm

While AI promises to transform criminal justice by increasing operational efficiency and improving public safety, it also comes with risks around privacy, accountability, fairness and human rights.

- Bunnings breached privacy law by scanning customers’ faces – but this loophole lets other shops keep doing it
  by Margarita Vladimirova, PhD in Privacy Law and Facial Recognition Technology, Deakin University on November 19, 2024 at 5:36 am

Despite the ruling against Bunnings, Australian businesses can continue to collect your biometric information without your explicit consent by simply putting up signs.

- In your face: our acceptance of facial recognition technology depends on who is doing it – and where
  by Nicholas Dynon, Doctoral Candidate, Centre for Defence & Security Studies, Te Kunenga ki Pūrehuroa – Massey University on November 8, 2024 at 1:41 am

While many people are comfortable with using facial recognition technology on their phone, they are less happy when it’s the government or private groups identifying them.

- What do people think about smartglasses? New research reveals a complicated picture
  by Fareed Kaviani, Research fellow, Emerging Technologies Research Lab, Monash University on November 7, 2024 at 11:28 pm

Views differ between owners and non-owners of smartglasses. But both groups agree the technology can help people.

- Wondering what AI actually is? Here are the 7 things it can do for you
  by Sandra Peter, Director of Sydney Executive Plus, University of Sydney on October 1, 2024 at 11:53 pm

AI impacts many things beyond the flashy chatbots – often in ways that quietly improve everyday processes.

Facial Recognition
